The Intel SSD 660p SSD Review: QLC NAND Arrives For Consumer SSDs

by Billy Tallis on August 7, 2018 11:00 AM EST

When NAND flash memory was first used for general purpose storage in the earliest ancestors of modern SSDs, the memory cells were treated as simply binary, storing a single bit of data per cell by switching cells between one of two voltage states. Since then demand for higher capacity has pushed the industry to store more bits in each flash memory cell.

In the past year, the deployment of 64-layer 3D NAND flash has allowed almost all of the SSD industry to adopt three bit per cell TLC flash, which only a few short years ago was the cutting edge. Now, four bit per cell, also known as Quad-Level Cell (QLC) NAND flash, is the current frontier.

Each transition to storing more bits per memory cell comes with significant downsides that offset the appeal of higher storage density. The four bits per cell storage mode of QLC requires discriminating between 16 voltage levels in a flash memory cell. The process of reading and writing with adequate precision is unavoidably slower than accessing NAND flash that stores fewer bits per cell. The error rates are higher, so QLC-capable SSD controllers need very robust error correction capabilities. Data retention and write endurance are reduced.
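The relationship between bits per cell and voltage levels is simple doubling: every extra bit doubles the number of states a cell must distinguish. A minimal sketch of that arithmetic follows; the function and dictionary names are illustrative and not taken from any vendor tool.

```python
# Distinct voltage states a flash cell must hold to store n bits.
# Names here are illustrative only.
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}


def voltage_levels(bits_per_cell: int) -> int:
    """Each additional bit doubles the number of states to distinguish."""
    return 2 ** bits_per_cell


for name, bits in CELL_TYPES.items():
    print(f"{name}: {bits} bit(s) per cell -> {voltage_levels(bits)} levels")
```

Going from TLC to QLC adds only one third more capacity per cell, but it doubles the number of levels that have to fit in the same voltage window.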

But QLC NAND is entering a market where TLC NAND can provide more performance and endurance than most consumers really need. QLC NAND doesn't introduce any fundamentally new problems; it is simply afflicted more severely with the challenges that have already been overcome by TLC NAND. The same strategies that are in widespread use to mitigate the downsides of TLC NAND are also usable for QLC NAND, but QLC will always be the cheaper, lower-quality alternative to TLC NAND.

On the commercial product front, Micron introduced an enterprise SATA SSD with QLC NAND this spring, and everyone else is working on QLC NAND as well. But for consumers, where the pricing advantages of QLC are going to be the most noticed, it is Intel who is the first to market with a consumer SSD that uses QLC NAND flash memory. Today the company is taking the wraps off of their new Intel SSD 660p, an entry-level M.2 NVMe SSD with up to 2TB of QLC NAND.

Intel has reportedly cut off further development of consumer SATA drives, so naturally their first consumer QLC SSD is a member of their 6-series, the lowest tier of NVMe SSDs. The 660p comes as a replacement for the Intel SSD 600p, Intel's first M.2 NVMe SSD and one of the first consumer NVMe drives that aimed to be cheaper and slower than the premium high-end NVMe SSDs, through the use of TLC NAND at a time when NVMe SSDs were still primarily using MLC NAND. The purpose of the Intel 660p is to push prices down even further while still providing better performance than SATA SSDs or the 600p.

| Intel SSD 660p Specifications | |||||

| Capacity | 512 GB | 1 TB | 2 TB | ||

| Controller | Silicon Motion SM2263 | ||||

| NAND Flash | Intel 64L 1024Gb 3D QLC | ||||

| Form-Factor, Interface | single-sided M.2-2280, PCIe 3.0 x4, NVMe 1.3 | ||||

| DRAM | 256 MB DDR3 | ||||

| Sequential Read | up to 1800 MB/s | ||||

| Sequential Write (SLC cache) | up to 1800 MB/s | ||||

| Random Read (4kB) | up to 220k IOPS | ||||

| Random Write (4kB, SLC cache) | up to 220k IOPS | ||||

| Warranty | 5 years | ||||

| Write Endurance | 100 TB 0.1 DWPD | 200 TB 0.1 DWPD | 400 TB 0.1 DWPD | ||

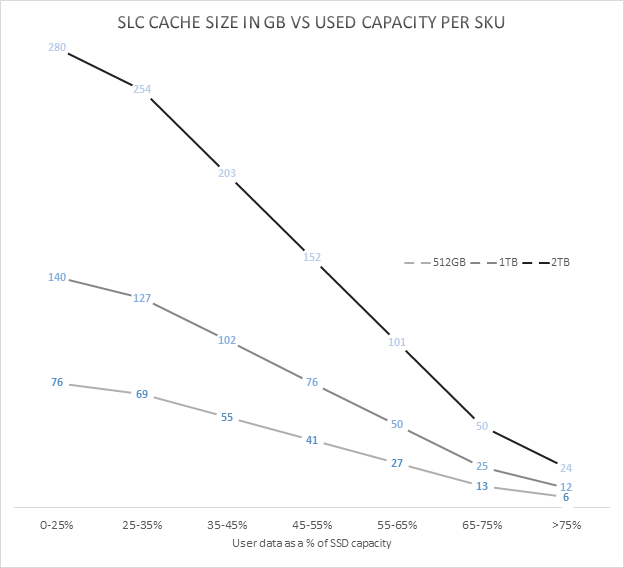

| SLC Write Cache (Minimum) | 6 GB | 12 GB | 24 GB | ||

| SLC Write Cache (Maximum) | 76 GB | 140 GB | 280 GB | ||

| MSRP | $99.99 (20¢/GB) | $199.99 (20¢/GB) | TBD | ||

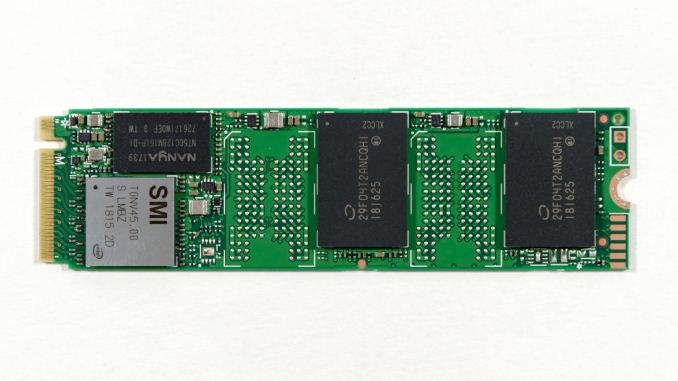

Looking under the hood, Intel's partnership with Silicon Motion for client and consumer SSDs continues with the use of the SM2263 NVMe SSD controller for the 660p. This is the smaller 4-channel sibling to the SM2262 and SM2262EN controllers that are doing very well in the more high-end parts of the SSD market. A 4-channel controller makes sense for a QLC drive, because the large 1Tb (128GB) per-die capacity of Intel's 64-layer 3D QLC NAND means it only takes a few chips to reach mainstream drive capacities.

The 660p lineup starts at 512GB (four QLC dies) and scales up to 2TB. All three capacities are single-sided M.2 2280 cards with a constant 256MB of DRAM. Mainstream SSDs typically use 1 GB of DRAM per 1TB of flash, so the 660p is rather short on DRAM even at the 512GB capacity. As a cost-oriented SSD it might make sense to use the DRAMless SM2263XT controller and the NVMe Host Memory Buffer feature, but that significantly complicates firmware development and error handling. The small size of the SM2263 controller allows Intel to fit all four NAND packages used by the 2TB model on one side of the PCB.
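The die counts and the DRAM shortfall can be checked with a few lines of arithmetic. This is a rough sketch that assumes the nominal capacities are 512, 1024 and 2048 GB; the function names are made up for illustration.

```python
# Back-of-the-envelope die and DRAM arithmetic for the 660p lineup.
# Assumption: nominal capacities are treated as 512, 1024 and 2048 GB.

DIE_GBIT = 1024          # per-die capacity from the spec table (1024Gb)
DIE_GB = DIE_GBIT // 8   # 8 bits per byte -> 128 GB per die
FITTED_DRAM_MB = 256     # the same on every capacity, per the spec table


def dies_needed(capacity_gb: int) -> int:
    """Number of QLC dies needed to reach a capacity (rounded up)."""
    return -(-capacity_gb // DIE_GB)


def rule_of_thumb_dram_mb(capacity_gb: int) -> int:
    """DRAM under the 1 GB per 1 TB rule of thumb, i.e. 1 MB per GB."""
    return capacity_gb


for capacity_gb in (512, 1024, 2048):
    print(
        f"{capacity_gb} GB: {dies_needed(capacity_gb)} dies, "
        f"{FITTED_DRAM_MB} MB DRAM fitted vs "
        f"{rule_of_thumb_dram_mb(capacity_gb)} MB by rule of thumb"
    )
```

Under these assumptions the 2TB model needs 16 dies, which works out to four dies in each of its four NAND packages.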

Intel doesn't break down performance specs for the 660p by drive capacity, and the read and write performance ratings are the same, thanks to the acceleration of SLC write caching. Intel doesn't provide any official spec for write performance after the SLC cache is filled, but we've measured about 100 MB/s on our 1TB sample. This steady-state sequential write speed will vary with drive capacity. Intel is offering a 5-year warranty on the drive and write endurance is about 0.1 drive writes per day, lower than the 0.3 DWPD typical of mainstream consumer SSDs, but something that should still be adequate for most users.
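The 0.1 DWPD figure follows from the endurance ratings in the spec table and the warranty period. A quick sketch of that calculation, assuming decimal units (1 TB = 1000 GB), 365-day years, and nominal capacities of 512, 1024 and 2048 GB; the helper names are hypothetical.

```python
# Convert a total-bytes-written rating into drive writes per day (DWPD).
# Assumptions: 1 TB = 1000 GB, 365-day years, nominal capacities in GB.

def drive_writes_per_day(endurance_tb: float, capacity_gb: float,
                         warranty_years: float) -> float:
    """Full-drive writes per day sustainable over the warranty period."""
    warranty_days = warranty_years * 365
    capacity_tb = capacity_gb / 1000
    return endurance_tb / (capacity_tb * warranty_days)


def gb_per_day(endurance_tb: float, warranty_years: float) -> float:
    """Average daily write budget in GB over the warranty period."""
    return endurance_tb * 1000 / (warranty_years * 365)


for capacity_gb, endurance_tb in ((512, 100), (1024, 200), (2048, 400)):
    dwpd = drive_writes_per_day(endurance_tb, capacity_gb, 5)
    budget = gb_per_day(endurance_tb, 5)
    print(f"{capacity_gb} GB: {dwpd:.2f} DWPD, about {budget:.0f} GB/day")
```

Because endurance scales linearly with capacity across the lineup, all three models land on the same DWPD value under these assumptions.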

Probably the most important aspect of a consumer QLC drive design is the behavior of the SLC cache. Consumer TLC drives universally treat a portion of their NAND flash memory as pseudo-SLC, using that higher-performing memory segment as a write cache. QLC SSDs are even more reliant on SLC caching because the performance of raw QLC NAND is even lower than that of TLC.

No SLC caching strategy can perfectly accelerate every workload and use case, and there are significant tradeoffs between different strategies. The Intel SSD 660p employs a variable-size SLC cache, and all data written goes first to the SLC cache before being compacted and folded into QLC blocks. This means that the steady-state 100MB/s sequential write speed we've measured is significantly below what the drive could deliver if the writes went directly to the QLC without the extra SLC to QLC copying step getting in the way. When the drive is mostly empty, up to about half of the available flash memory cells will be treated as SLC NAND. As the drive fills up, blocks will be converted to QLC usage, shrinking the size of the cache and making it more likely that a real-world use case could write enough to fill that cache.
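To get a feel for how much the cache size matters, here is a deliberately simplified model of one large sequential write: data lands in the SLC cache at the rated speed until the cache is full, and the remainder proceeds at the steady-state speed. It assumes no idle time for flushing, a cache size that stays fixed during the transfer, and the rated 1800 MB/s and measured 100 MB/s figures from this review. It is not a description of Intel's firmware.

```python
# Simplified two-phase model of a large sequential write to the 1TB 660p.
# Assumptions: no background flushing during the transfer, fixed cache
# size, decimal units (1 GB = 1000 MB). Speeds are taken from this review.

CACHED_MBPS = 1800   # rated "up to" SLC-cached sequential write speed
STEADY_MBPS = 100    # approximate post-cache speed measured on the 1TB sample


def write_seconds(transfer_gb: float, cache_gb: float) -> float:
    """Estimated time for one sequential write of transfer_gb."""
    cached_gb = min(transfer_gb, cache_gb)
    overflow_gb = transfer_gb - cached_gb
    return cached_gb * 1000 / CACHED_MBPS + overflow_gb * 1000 / STEADY_MBPS


# Cache sizes for the 1TB model from the spec table: 140 GB max, 12 GB min.
for label, cache_gb in (("mostly empty, 140 GB cache", 140),
                        ("full, 12 GB cache", 12)):
    print(f"50 GB write ({label}): {write_seconds(50, cache_gb):.0f} s")
```

In this model the same 50 GB write takes well under a minute on a mostly empty drive and several minutes on a full one, which is why the state of fill matters so much for a drive like this.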

Our current test suite cannot fully capture the dynamics of a variable-size SLC cache, and we haven't had the 660p in hand long enough to thoroughly test it at various states of fill. When the Intel SSD 660p is mostly empty and the SLC cache size is huge, many of our standard benchmarks end up testing primarily the performance of the SLC cache — and for reads in addition to writes, because in these conditions the 660p isn't very aggressive about moving data from SLC blocks to QLC. As a result, our synthetic benchmark tests have been run both with our standard methodology, and with a completely full drive so that the tests measure performance of the QLC memory (with an SLC cache that is too small to entirely contain any of our tests). The two sets of scores thus represent the two extremes of performance that the Intel SSD 660p can deliver. The full-drive results in this review represent a worst-case scenario that will almost never be encountered by real-world usage, because our tests give the drive limited idle time to flush the SLC cache but in the real world consumer workloads almost always give SSDs far more idle time than they need.

Intel's initial pricing for the 660p works out to just under 20¢/GB, putting it very close to the street prices of the cheapest current-generation TLC-based SSDs. The Intel SSD 660p goes on sale today, and is being showcased by Intel at Flash Memory Summit this week. In traveling to FMS, I left behind a testbed full of drives running extra benchmarks for this review. When I can catch a break from all the news and activities at FMS, I will be adding to this review.

The Competition

The Intel SSD 660p is positioned as a very cheap entry-level NVMe SSD, so our primary focus is on comparing it against other low-end NVMe drives and against SATA drives. As usual, the Crucial MX500 serves as our representative of mainstream SATA SSDs thanks to its consistently good pricing and solid all-around performance. The other low-end NVMe SSDs in this review are the 660p's predecessor Intel SSD 600p, the Phison E8-based Kingston A1000, and the Toshiba RC100 DRAMless NVMe SSD that uses the Host Memory Buffer feature.

| AnandTech 2018 Consumer SSD Testbed | |

| CPU | Intel Xeon E3 1240 v5 |

| Motherboard | ASRock Fatal1ty E3V5 Performance Gaming/OC |

| Chipset | Intel C232 |

| Memory | 4x 8GB G.SKILL Ripjaws DDR4-2400 CL15 |

| Graphics | AMD Radeon HD 5450, 1920x1200@60Hz |

| Software | Windows 10 x64, version 1709 |

| Linux kernel version 4.14, fio version 3.6 | |

| Spectre/Meltdown microcode and OS patches current as of May 2018 | |

- Thanks to Intel for the Xeon E3 1240 v5 CPU

- Thanks to ASRock for the E3V5 Performance Gaming/OC

- Thanks to G.SKILL for the Ripjaws DDR4-2400 RAM

- Thanks to Corsair for the RM750 power supply, Carbide 200R case, and Hydro H60 CPU cooler

- Thanks to Quarch for the XLC Programmable Power Module and accessories

- Thanks to StarTech for providing a RK2236BKF 22U rack cabinet.

86 Comments


zodiacfml - Wednesday, August 8, 2018 - link

I think the limiting factor for reliability is the electronics/controller, not the NAND. You just lose drive space with a QLC much sooner with plenty of writes.

romrunning - Wednesday, August 8, 2018 - link

Given that you can buy 1TB 2.5" HDD for $40-60 (maybe less for volume purchases), and even this QLC drive is still $0.20/GB, I think it's still going to be quite a while before notebook mfgs replace their "big" HDD with a QLC drive. After all, the first thing the consumer sees is "it's got lots of storage!"

evilpaul666 - Wednesday, August 8, 2018 - link

Does the 660p series of drives work with the Intel CAS (Cache Acceleration Software)? I've used the trial version and it works about as well as Optane does for speeding up a mechanical HDD while being quite a lot larger.

eddieobscurant - Wednesday, August 8, 2018 - link

Wow,this got a recommended award and the adata 8200 didn't. Another pro-intel marketing from anandtech. Waiting for biased threadripper 2 review.

BurntMyBacon - Wednesday, August 8, 2018 - link

The performance of this SSD is quite bipolar. I'm not sure I'd be as generous with the award. Though, I think the decision to give out an award had more to do with the price of the drive and the probable performance for typical consumer workloads than some "pro-intel marketing" bias.

danwat1234 - Wednesday, August 8, 2018 - link

The drive is only rated to write to each cell 200 times before it begins to wear out? Ewwww.

azazel1024 - Wednesday, August 8, 2018 - link

For some consumer uses, yes 100MiB/sec constant write speed isn't terrible once the SLC cache is exhausted, but it'll probably be a no for me. Granted, SSD prices aren't where I want them to be yet to replace my HDDs for bulk storage. Getting close, but prices still need to come down by about a factor of 3 first.

My use case is 2x1GbE between my desktop and my server and at some point sooner rather than later I'd like to go with 2.5GbE or better yet 5GbE. No, I don't run 4k video editing studio or anything like that, but yes I do occasionally throw 50GiB files across my network. Right now my network link is the bottleneck, though as my RAID0 arrays are filling up, it is getting to be disk bound (2x3TB Seagate 7200rpm drive arrays in both machines). And small files it definitely runs in to disk issues.

I'd like the network link to continue to be the limiting factor and not the drives. If I moved to a 2.5GbE link which can push around 270MiB/sec and I start lobbing large files, the drive steady state write limits are going to quickly be reached. And I really don't want to be running an SSD storage array in RAID. That is partly why I want to move to SSDs so I can run a storage pool and be confident that each individual SSD is sufficiently fast to at least saturate 2.5GbE (if I run 5GbE and the drives can't keep up, at least in an SLC cache saturated state, I am okay with that, but I'd like them to at least be able to run 250+ MiB/sec).

Also although rare, I've had to transfer a full back-up of my server or desktop to the other machine when I've managed to do something to kill the file copy (only happened twice over the last 3 years, but it HAS happened. Also why I keep a cold back-up that is updated every month or two on an external HDD). When you are transferring 3TiB or so of data, being limited to 100MiB/sec would really suck. At least right now when that happens I can push an average of 200MiB/sec (accounting for some of it being smaller files which are getting pushed at more like 80-140MiB/sec rather than the 235MiB/sec of large files).

That is a difference from close to 8:30 compared to about 4:15. Ideally I'd be looking at more like 3:30 for 3TiB.

But, then again, looking at price movement, unless I win the lottery, SSD prices are probably going to take at least 4 or more likely 5-6 years before I can drop my HDD array and just replace it with SSDs. Heck, odds are excellent I'll end up replacing my HDD array with a set of even faster 4 or 6TiB HDDs before SSDs are closer enough in price (closer enough to me is paying $1000 or less for 12TB of SSD storage).

That is keeping in mind that with HDDs I'd likely want utilized capacity under 75% and ideally under 67% to keep from utilizing those inner tracks and slowing way down. With SSDs (ignoring the SLC write cache size reductions), write penalties seem to be much less. Or at least the performance (for TLC and MLC) is so much higher than HDDs to start with, that it still remains high enough not to be a serious issue for me.

So an SSD storage pool could probably be up around 80-90% utilized and be okay, where as a HDD array is going to want to be no more than 67-75% utilized. And also in my use case, it should be easy enough to simply slap in another SSD to increase the pool size, with HDDs I'd need to chuck the entire array and get new sets of matched drives.

iwod - Wednesday, August 8, 2018 - link

On Mac, two weeks of normal usage has gotten 1TB of written data. And it does 10-15GB on average per day.

100TB endurance is nothing.......

abufrejoval - Wednesday, August 8, 2018 - link

I wonder if underneath the algorithm has already changed to do what I’d call the ‘smart’ thing: Essentially QLC encoding is a way of compression (brings back old memories about “Stacker”) data 4:1 at the cost of write bandwidth.

So unless you run out of free space, you first let all data be written in fast SLC mode and then start compressing things into QLC as a background activity. As long as the input isn’t constantly saturated, the compression should reclaim enough SLC mode blocks faster on average after compression than they are filled with new data. The bigger the overall capacity and remaining cache, the longer the burst it can sustain. Of course, once the SSD is completely filled the cache will be whatever they put into the spare area and updates will dwindle down to the ‘native’ QLC write rate of 100MB/s.

In a way this is the perfect storage for stuff like Steam games: Those tend to be hundreds of gigabytes these days, they are very sensitive to random reads (perhaps because the developers don’t know how to tune their data) but their maximum change rate is actually the capacity of your download bandwidth (wish mine was 100MB/s).

But it’s also great for data warehouse databases or quite simply data that is read-mostly, but likes high bandwidth and better latency than spinning disks.

The problem that I see, though, is that the compression pass needs power. So this doesn’t play well with mobile devices that you shut off immediately after slurping massive amounts of data. Worst case would be a backup SSD where you write and unplug.

The specific problem I see for Anandtech and technical writers is that you’re no longer comparing hardware but complex software. And Emil Post proved in 1946, that it’s generally impossible.

And with an MRAM buffer (those other articles) you could even avoid writing things at SLC first, as long as the write bursts do not overflow the buffer and QLC encoding empties it faster on average that it is filled. Should a burst overflow it, it could switch to SLC temporarily.

I think I like it…

And I think I would like it even better, if you could switch the caching and writing strategy at the OS or even application level. I don’t want to have to decide between buying a 2TB QLC, 1TB TLC, a 500GB MLC or 250GB SLC and then find out I need a little more here and a little less there. I have knowledge at the application (usage level), how long-lived my data will be and how it should best be treated: Let’s just use it, because the hardware internally is flexible enough to support at least SLC, TLC and QLC.

That would also make it easier to control the QLC rewrite or compression activity in mobile or portable form factors.

ikjadoon - Thursday, August 9, 2018 - link

Billy, thank you!

I posted a reddit comment a long time ago about separating SSD performance by storage size! I might be behind, but this is the first I’ve seen of it. It’s, to me, a much more reliable graph for purchases.

A big shout out. 💪👌