Enterprise SATA SSDs: Can Budget 2020 beat Top Line 2017?

by Billy Tallis on February 4, 2020 11:00 AM EST

Mixed Random I/O Performance

Real-world storage workloads usually aren't pure reads or writes but a mix of both. It is completely impractical to test and graph the full range of possible mixed I/O workloads—varying the proportion of reads vs writes, sequential vs random and differing block sizes leads to far too many configurations. Instead, we're going to focus on just a few scenarios that are most commonly referred to by vendors, when they provide a mixed I/O performance specification at all. We tested a range of 4kB random read/write mixes at queue depth 32, the maximum supported by SATA SSDs. This gives us a good picture of the maximum throughput these drives can sustain for mixed random I/O, but in many cases the queue depth will be far higher than necessary, so we can't draw meaningful conclusions about latency from this test. This test uses 8 threads each running at QD4. This spreads the work over many CPU cores, and for NVMe drives it also spreads the I/O across the drive's several queues.
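
As a rough illustration of the test shape described above, the sketch below builds one command line per read/write mix. It assumes fio as the load generator, which this page does not name. The device path, the runtime and the 10% step between mixes are placeholders, not values from the review.

```python
# Sketch only: fio is an assumed tool; device, runtime and step are placeholders.
THREADS = 8          # from the article: 8 worker threads
QD_PER_THREAD = 4    # from the article: each thread runs at QD4
BLOCK_SIZE = "4k"    # from the article: 4kB random I/O


def total_queue_depth(threads=THREADS, qd_per_thread=QD_PER_THREAD):
    """Aggregate queue depth presented to the drive."""
    return threads * qd_per_thread


def fio_args(read_pct, device="/dev/sdX", runtime_s=60):
    """Build an fio command line for one read/write mix.

    device and runtime_s are hypothetical placeholders.
    """
    if not 0 <= read_pct <= 100:
        raise ValueError("read_pct must be between 0 and 100")
    return [
        "fio",
        "--name=mixed-randrw",
        f"--filename={device}",
        "--ioengine=libaio",
        "--direct=1",
        "--rw=randrw",
        f"--rwmixread={read_pct}",
        f"--bs={BLOCK_SIZE}",
        f"--iodepth={QD_PER_THREAD}",
        f"--numjobs={THREADS}",
        "--time_based",
        f"--runtime={runtime_s}",
        "--group_reporting",
    ]


def mix_sweep(step=10):
    """Read percentages from pure read down to pure write (step is assumed)."""
    return list(range(100, -1, -step))


if __name__ == "__main__":
    print("total queue depth:", total_queue_depth())
    for pct in mix_sweep():
        print(" ".join(fio_args(pct)))
```

Eight jobs at an I/O depth of 4 each add up to the QD32 total quoted above. The 70% read case corresponds to `fio_args(70)`.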

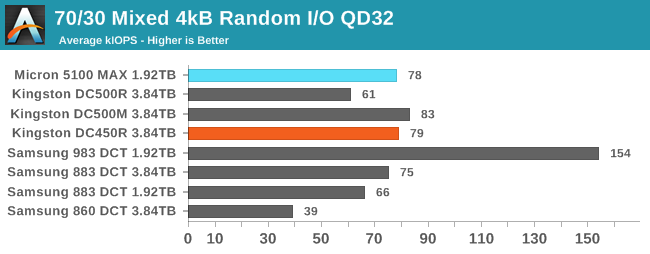

The full range of read/write mixes is graphed below, but we'll primarily focus on the 70% read, 30% write case that is a fairly common stand-in for moderately read-heavy mixed workloads.

With a 70% read/30% write mix, the low-end SATA drives are able to perform far better than they can for a pure random write test. The Kingston DC450R seems to benefit the most, and it's staying even further above its steady-state write speed than the DC500R does. The Micron 5100 MAX is also performing a bit above expectations, since its spec sheet calls for just 70k IOPS. The end result is that there's no clear performance winner among these SATA drives, and the latest-and-greatest models aren't necessary to get good throughput on this workload.

Power Efficiency in MB/s/W | Average Power in W

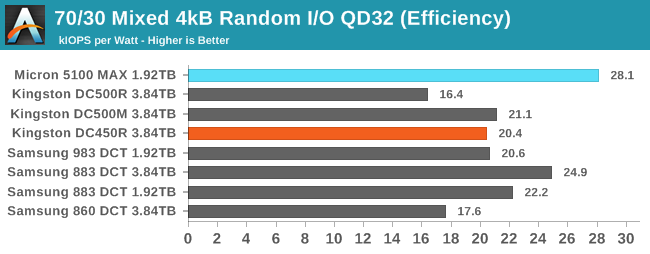

The power efficiency scores are a mixed bag. The Micron 5100 MAX comes out on top, followed by the Samsung 883 DCT. While the Kingston drives draw more power than the other SATA drives on this workload, they generally have enough performance that their efficiency scores aren't too bad.
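
The MB/s/W unit on the chart implies throughput divided by average power. The sketch below shows that arithmetic for a 4kB workload. It assumes 4kB means 4096 bytes and MB means 10^6 bytes, and the numbers in the usage line are illustrative, not measurements from this review.

```python
def throughput_mb_per_s(iops, block_bytes=4096):
    """Convert an IOPS figure to MB/s (assuming 1 MB = 10**6 bytes)."""
    return iops * block_bytes / 1e6


def efficiency_mb_per_s_per_w(iops, avg_power_w, block_bytes=4096):
    """Throughput per watt of average power draw."""
    if avg_power_w <= 0:
        raise ValueError("avg_power_w must be positive")
    return throughput_mb_per_s(iops, block_bytes) / avg_power_w


if __name__ == "__main__":
    # Hypothetical drive: 50,000 IOPS at 2.0 W average power.
    print(efficiency_mb_per_s_per_w(50_000, 2.0))
```

This is why a drive that draws more power can still post a respectable efficiency score, as long as its throughput rises by a similar factor.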

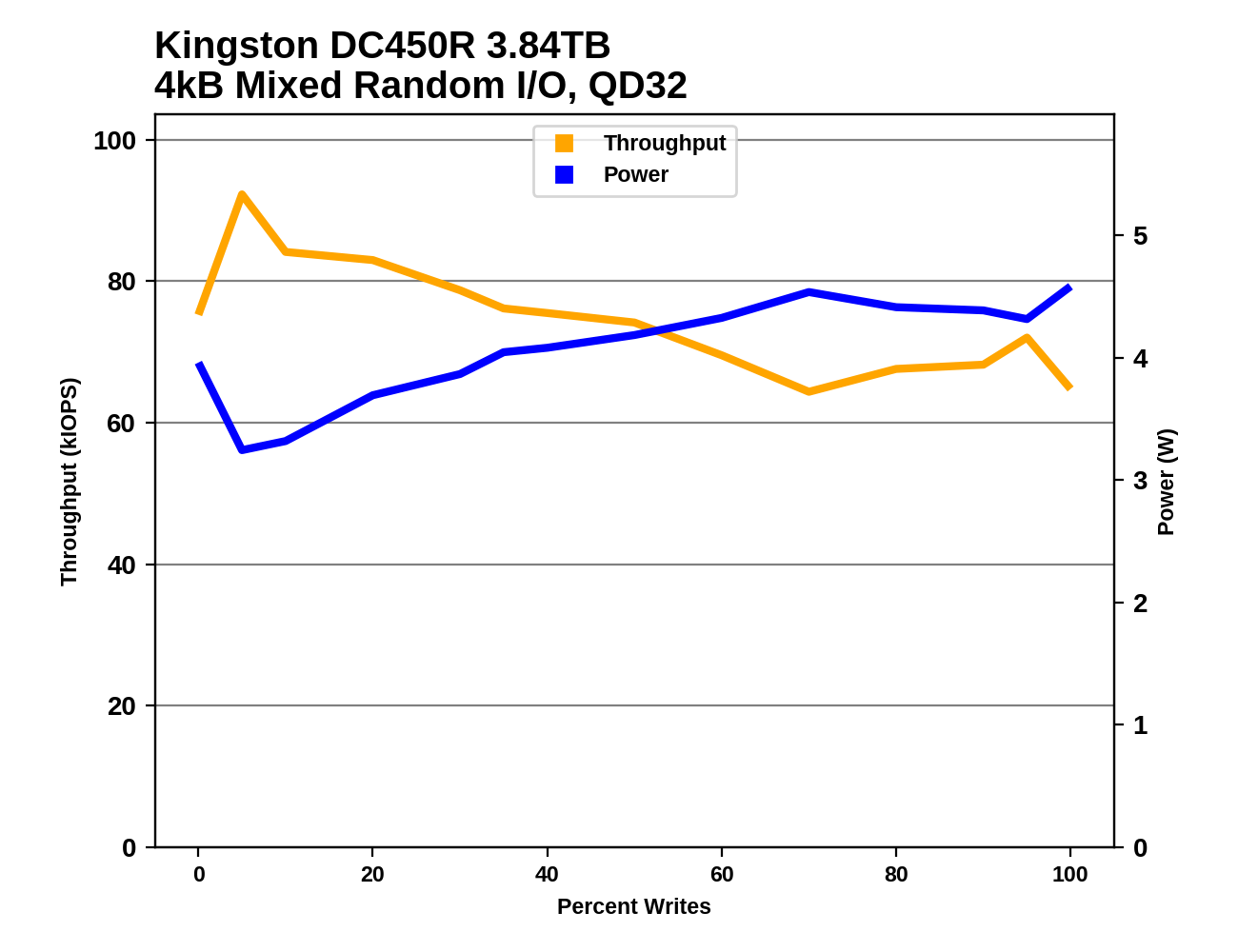

Looking at the entire range of mixes, we see the Samsung drives showing fairly ordinary steep declines in performance as the workload becomes more write-heavy. The performance curve from the Micron 5100 MAX also looks fairly normal, given that it can maintain a very high write throughput and thus shouldn't bottom out anywhere near as low as the other drives. The Kingston drives are another story. Even though this mixed random IO test was run immediately after the random write tests, these drives don't appear to have remained in a steady-state of being saturated with writes. The DC500R may be closest, with relatively low performance except for the pure-read phase of the test. The DC450R is slower and less consistent than the DC500M, but still definitely punching above its weight here.

Aerospike Certification Tool

Aerospike is a high-performance NoSQL database designed for use with solid state storage. The developers of Aerospike provide the Aerospike Certification Tool (ACT), a benchmark that emulates the typical storage workload generated by the Aerospike database. This workload consists of a mix of large-block 128kB reads and writes, and small 1.5kB reads. When the ACT was initially released back in the early days of SATA SSDs, the baseline workload was defined to consist of 2000 reads per second and 1000 writes per second. A drive is considered to pass the test if it meets the following latency criteria:

- fewer than 5% of transactions exceed 1ms

- fewer than 1% of transactions exceed 8ms

- fewer than 0.1% of transactions exceed 64ms
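
The three criteria above can be sketched as a simple check over a list of per-transaction latencies. This is a simplified illustration, not ACT's own implementation: the real tool reports latency histograms over time, and the function names here are hypothetical.

```python
# (threshold in ms, maximum allowed fraction of transactions above it)
ACT_LIMITS = [(1.0, 0.05), (8.0, 0.01), (64.0, 0.001)]


def fraction_exceeding(latencies_ms, threshold_ms):
    """Fraction of transactions slower than threshold_ms."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    slow = sum(1 for lat in latencies_ms if lat > threshold_ms)
    return slow / len(latencies_ms)


def passes_act_qos(latencies_ms, limits=ACT_LIMITS):
    """True if every threshold is exceeded by strictly fewer than its limit."""
    return all(
        fraction_exceeding(latencies_ms, threshold) < max_fraction
        for threshold, max_fraction in limits
    )


if __name__ == "__main__":
    # Hypothetical sample: 4% of transactions take 2 ms, the rest 0.5 ms.
    sample = [0.5] * 960 + [2.0] * 40
    print(passes_act_qos(sample))
```

Note that a single long stall can fail the 64ms criterion even when the 1ms and 8ms criteria are met comfortably.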

Drives can be scored based on the highest throughput they can sustain while satisfying the latency QoS requirements. Scores are normalized relative to the baseline 1x workload, so a score of 50 indicates 100,000 reads per second and 50,000 writes per second. Since this test uses fixed IO rates, the queue depths experienced by each drive will depend on their latency, and can fluctuate during the test run if the drive slows down temporarily for a garbage collection cycle. The test will give up early if it detects the queue depths growing excessively, or if the large block IO threads can't keep up with the random reads.
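
The normalization is plain multiplication of the 1x baseline. A minimal sketch, with a hypothetical function name:

```python
BASE_READS_PER_S = 2000   # 1x baseline from the ACT definition above
BASE_WRITES_PER_S = 1000


def rates_for_score(score):
    """Return (reads per second, writes per second) for an ACT multiplier."""
    if score < 0:
        raise ValueError("score must be non-negative")
    return score * BASE_READS_PER_S, score * BASE_WRITES_PER_S


if __name__ == "__main__":
    print(rates_for_score(50))
```

A score of 50 gives 100,000 reads and 50,000 writes per second, matching the figures quoted above.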

We used the default settings for queue and thread counts and did not manually constrain the benchmark to a single NUMA node, so this test produced a total of 64 threads scheduled across all 72 virtual (36 physical) cores.

The usual runtime for ACT is 24 hours, which makes determining a drive's throughput limit a long process. For fast NVMe SSDs, this is far longer than necessary for drives to reach steady-state. In order to find the maximum rate at which a drive can pass the test, we start at an unsustainably high rate (at least 150x) and incrementally reduce the rate until the test can run for a full hour, and then decrease the rate further if necessary to get the drive under the latency limits.
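
The search procedure can be sketched as a downward sweep. The callback `run_act` is hypothetical and stands in for a one-hour ACT run at a given multiplier. The step size is an assumption, since the review only says the rate is reduced incrementally.

```python
def find_max_passing_rate(run_act, start=150, step=5, min_rate=1):
    """Lower the ACT multiplier until a run both completes and meets QoS.

    run_act(rate) is a hypothetical callback returning a pair
    (completed_full_hour, met_latency_limits). Returns the first passing
    rate found while stepping down, or None if even min_rate fails.
    """
    rate = start
    while True:
        completed, met_qos = run_act(rate)
        if completed and met_qos:
            return rate
        if rate <= min_rate:
            return None
        rate = max(min_rate, rate - step)


if __name__ == "__main__":
    # Made-up drive model: keeps up at 60x or below, meets latency at 40x or below.
    def fake_drive(rate):
        return rate <= 60, rate <= 40

    print(find_max_passing_rate(fake_drive))
```

Stepping down from the top means most of the early runs abort quickly, which keeps the total time well below a series of full-length runs.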

We have updated to version 5.2 of ACT, and these numbers are not exactly equivalent to our previous reviews.

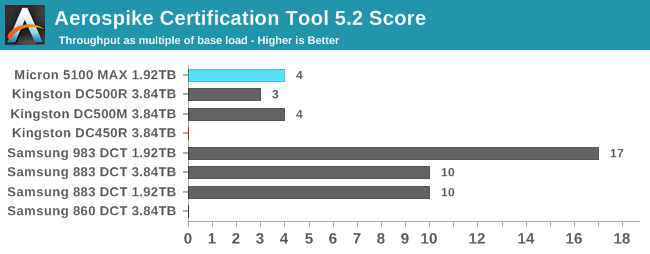

Despite its generous overprovisioning, the Micron 5100 MAX turns in a much lower ACT score than the Samsung 883 DCT. Even though the 5100 MAX can sustain high random write throughput (by SATA standards), its poor QoS prevents it from scoring well here. The Kingston DC450R, like the similarly low-end 860 DCT, cannot meet the test's QoS requirements even at the 1x workload rate.

Power Efficiency | Average Power in W

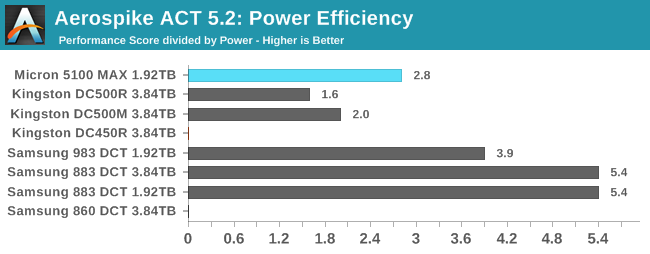

Most of the SATA drives hover at or just below 2W during the ACT test, but the Micron 5100 MAX averages 1.4W. That allows it to earn an efficiency score that is clearly better than the Kingston DC500s, but still only half that of the Samsung 883 DCT.

20 Comments

romrunning - Tuesday, February 4, 2020 - link

I understand the reason (batch of excess drives leftover from OEM) for using the Micron 5100 MAX, but for "enterprise" SSD use, I really would have liked to see Intel's DC-series (DC = Data Center) of enterprise SSDs compared.

DanNeely - Tuesday, February 4, 2020 - link

This is a new benchmark suite, which means all the drives Billy has will need to be re-run through it to get fresh numbers, which is why the comparison selection is so limited. More drives will come in as they're retested; but looking at the bench SSD 2018 data it looks like the only enterprise SSDs Intel has sampled are Optanes.

Billy Tallis - Tuesday, February 4, 2020 - link

This review was specifically to get the SATA drives out of the way. I also have 9 new enterprise NVMe drives to be included in one or two upcoming reviews, and that's where I'll include the fresh results for drives I've already reviewed like the Intel P4510, Optane P4800X and Memblaze PBlaze5.

I started benchmarking drives with the new test suite at the end of November, and kept the testbed busy around the clock until the day before I left for CES.

romrunning - Wednesday, February 5, 2020 - link

I appreciate getting more enterprise storage reviews! Not enough of them, especially for those times when you might have some leeway on which storage to choose.

pandemonium - Wednesday, February 5, 2020 - link

Agreed. I'm curious how my old pseudo enterprise Intel 750 would compare here.

Scipio Africanus - Tuesday, February 4, 2020 - link

I love those old stock enterprise drives. Before I went NVMe just a few months ago, my primary drive was a Samsung SM863 960GB, which was a pumped-up high endurance 850 Pro.

shodanshok - Wednesday, February 5, 2020 - link

Hi Billy, thanks for the review. I always appreciate similar articles.

That said, this review really fails to take into account the true difference between the listed enterprise disks, and this is due to a general misunderstanding of what powerloss protection does and why it is important.

In the introduction, you state that powerloss protection is a data integrity feature. While nominally true, the real added value of powerloss protection is much higher performance for synchronized write workloads (ie: SQL databases, filesystem metadata updates, virtual machines, etc). Even disks *without* powerloss protection can give perfect data integrity: this is achieved with flush/fsync (on the application/OS side) and SATA write barriers/FUAs. Applications which do not use fsync will be unreliable even on drives featuring powerloss protection, as any write will be cached in the OS cache for a relatively long time (~1s on Windows, ~30s on Linux), unless the file is opened in direct/unbuffered mode.

Problem is, synchronous writes are *really* expensive and slow, even for flash-backed drives. I have the OEM version of a fast M.2 Samsung 960 EVO NVMe drive and in 4k sync writes it shows only ~300 IOPS. For unsynced, direct writes (ie: bypassing the OS cache but using its internal DRAM buffer), it has 1000x the IOPS. To be fast, flash really needs write coalescing. Avoiding that (via SATA flushes) really wreaks havoc on the drive's performance.

Obviously, not all writes need to be synchronous: most use cases can tolerate a very low data loss window. However, for applications where data integrity and durability are paramount (such as SQL databases), sync writes are absolutely necessary. In these cases, powerloss protected SSDs will have performance one (or more) order of magnitude higher than consumer disks: having a non-volatile DRAM cache (thanks to power capacitors), they will simply *ignore* SATA flushes. This enables write aggregation/coalescing and the resulting very high performance advantage.

In short: if faced with a SQL or VM workload, the Kingston DC450R will fare very poorly, while the Micron 5100 MAX (or even the DC500R) will be much faster. This is fine: the DC450R is a read-intensive drive, not intended for SQL workloads. However, this review (which puts it up against powerloss protected drives) fails to account for that key difference.

mgiammarco - Thursday, February 6, 2020 - link

I agree completely. Finally, someone who explains the real importance of power loss protection. Frankly speaking, should an "enterprise SSD" without PLP and with low write endurance really be called "enterprise"? What is the difference from a standard SSD?

AntonErtl - Wednesday, February 5, 2020 - link

There is a difference between data integrity and persistency, but power-loss protection is needed for either.

Data integrity is when your data is not corrupted if the system fails, e.g., from a poweroff; this needs the software (in particular the database system and/or file system) to request the writes in the right order, but it also needs the hardware to not reorder the writes, at least as far as the persistent state is concerned. Drives without power-loss protection tend not to give guarantees in this area; some guarantee that they will not damage data written long ago (which other drives may do when they consolidate the still-living data in an erase block), but that's not enough for data integrity.

Persistency is when the data is at least as up-to-date as when your last request for persistency (e.g., fsync) was completed. That's often needed in server applications; e.g., when a customer books something, the data should be in persistent storage before the server sends out the booking data to the customer.

shodanshok - Wednesday, February 5, 2020 - link

fsync(), write barriers and SATA flushes/FUAs put strong guarantees on data integrity and durability even *without* a powerloss protected drive cache. So, even a "serious" database running on any reliable disk (ie: one not lying about flushes) will be 100% functional/safe; however, performance will tank.

A drive with a powerloss protected cache will give much higher performance but, if the application is correctly using fsync() and the OS supports write barriers, no added integrity/durability capability.

Regarding write reordering: write barriers explicitly avoid that.
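
For readers who want to see the cost of the sync writes discussed in this thread, here is a minimal sketch that times 4kB appends with and without an fsync() after each write. It is an illustration under stated assumptions, not a benchmark from the review: the unsynced case goes through the OS page cache rather than direct I/O, the function name is hypothetical, and results depend entirely on the drive and filesystem.

```python
import os
import tempfile
import time


def writes_per_second(path, count=200, block=4096, sync=False):
    """Append `count` blocks to `path`, optionally calling fsync after each."""
    buf = b"\0" * block
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        start = time.perf_counter()
        for _ in range(count):
            os.write(fd, buf)
            if sync:
                os.fsync(fd)  # ask the OS to flush this file to stable storage
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    return count / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        target = os.path.join(tmp, "sync_test.bin")
        print("buffered writes/s:", round(writes_per_second(target)))
        print("fsync'd writes/s: ", round(writes_per_second(target, sync=True)))
```

Run it against a file on the drive of interest. The gap between the two figures is the kind of difference the comments above are describing.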