Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM ESTGraphics Performance Comparison

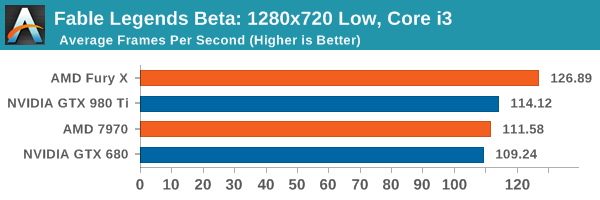

With the background and context of the benchmark covered, we now dig into the data and see what we have to look forward to with DirectX 12 game performance. This benchmark has preconfigured batch files that will launch the utility at either 3840x2160 (4K) with settings at ultra, 1920x1080 (1080p) also on ultra, or 1280x720 (720p) with low settings more suited for integrated graphics environments.

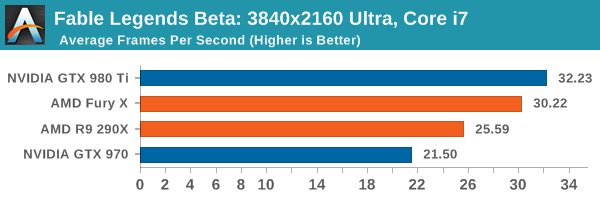

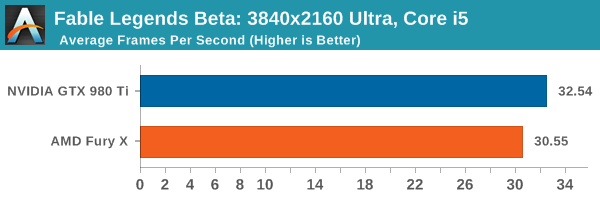

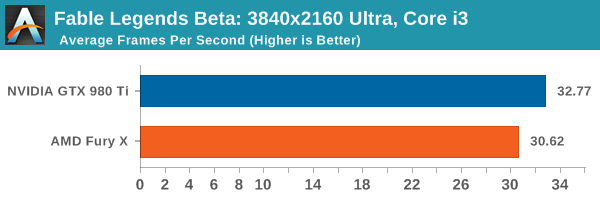

When dealing with 3840x2160 resolution, the GTX 980 Ti has a single digit percentage lead over the AMD Fury X, but both are above the bare minimum of 30 FPS no matter what the CPU.

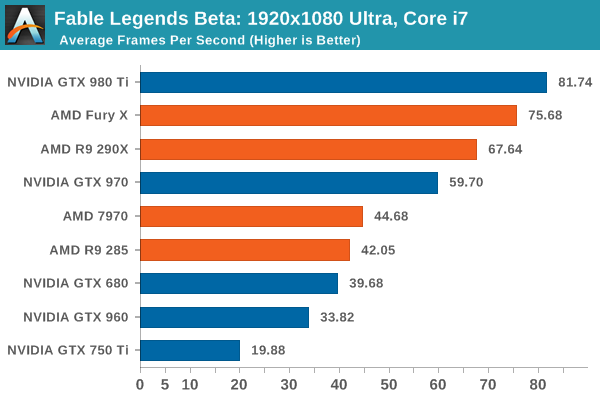

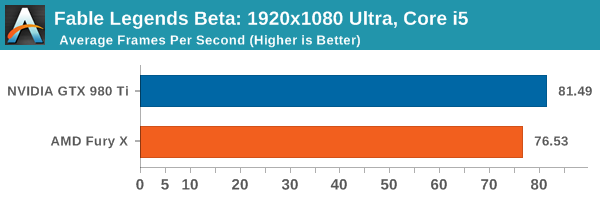

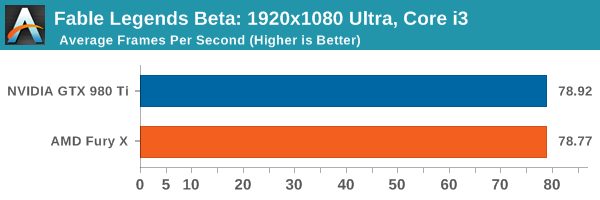

When dealing with the i5 and i7 at 1920x1080 ultra settings, the GTX 980 Ti still has that single digit percentage lead, but at Core i3 levels of CPU power the difference is next to zero, suggesting we are CPU limited even though the frame difference from i3 to i5 is minimal. If we look at the range of cards under the Core i7 at this point, the interesting thing here is that the GTX 970 just about hits that 60 FPS mark, while some of the older generation cards (7970/GTX 680) would require compromises in the settings to push it over the 60 FPS barrier at this resolution. The GTX 750 Ti doesn’t come anywhere close, suggesting that this game (under these settings) is targeting upper mainstream to lower high end cards. It would be interesting to see if there is an overriding game setting that ends up crippling this level of GPU.

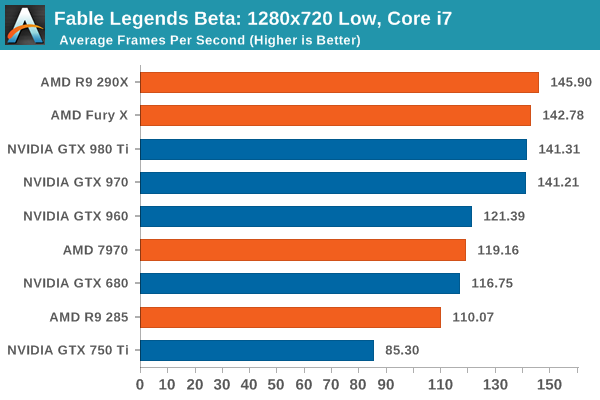

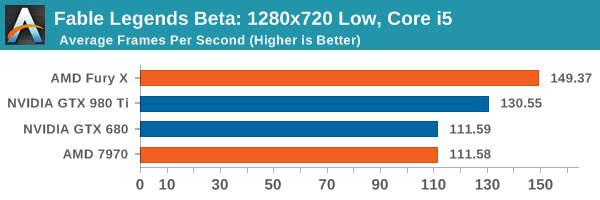

At the 720p low settings, the Core i7 pushes everything above 60 FPS, but you need at least an AMD 7970/GTX 960 to start going for 120 FPS if only for high refresh rate panels. We are likely being held back by CPU performance as illustrated by the GTX 970 and GTX 980 Ti being practically tied and the R9 290X stepping ahead of the pack. This makes it interesting when we consider integrated graphics, which we might test for a later article. It is worth noting that at the low resolution, the R9 290X and Fury X pull out a minor lead over the NVIDIA cards. The Fury X expands this lead with the i5 and i3 configurations, just rolling over to the double digit percentage gains.

141 Comments

View All Comments

piiman - Saturday, September 26, 2015 - link

"Yes, but when the goal is to show improvements in rendering performance"I'm completely confused with this "comparison"

How does this story even remotely show how will Dx12 works compared to Dx11? All they did was a Dx12 VIDEO card comparison? It tells us NOTHING in regard to how much faster Dx12 is compared to 11.

inighthawki - Saturday, September 26, 2015 - link

I guess what I mean is the purpose of a graphics benchmark is not to show real world game performance, it is to show the performance of the graphics API. This this case, the goal is trying to show that D3D12 works well. Throwing someone into a 64 player match of battlefield 4 to test a graphics benchmark defeats the purpose because you are introducing a bunch of overhead completely unrelated to graphics.figus77 - Monday, September 28, 2015 - link

You are wrong, many dx12 implementation will help on very chaotic situation with many pg and big use of IA, this benchmark is usefull like a 3dmark... just look at the images and say is a nice graphics (still Witcher3 in DX11 is far better for me)inighthawki - Tuesday, September 29, 2015 - link

I think you missed the point - I did not say it would not help, I just said that throwing on tons of extra overhead does not isolate the overhead improvements on the graphics runtime. You would get fairly unreliable results due to the massive variation caused by actual gameplay. When you do a benchmark of a specific thing - e.g. a graphics benchmark, which is what this is, then you want to perform as little non-graphics work as possible.mattevansc3 - Thursday, September 24, 2015 - link

Yes, the game built on AMD technology (Mantle) before being ported to DX12, sponsored by AMD, made in partnership with AMD and received development support from AMD is a more representative benchmark than a 3rd party game built on a hardware agnostic engine.YukaKun - Thursday, September 24, 2015 - link

Yeah, cause Unreal it's very neutral.Remember the "TWIMTBP" from 1999 to 2010 in every UE game? Don't think UE4 is a clean slate coding wise for AMD and nVidia. They will still favor nVidia re-using old code paths for them, so I'm pretty sure even if the guys developing Fable are neutral (or try to), UE underneath is not.

Cheers!

BillyONeal - Thursday, September 24, 2015 - link

That's because AMD's developer outreach was terrible at the time, not because Unreal did anything specific.Kutark - Monday, September 28, 2015 - link

Yes, but you have to remember, Nvidia is Satan, AMD is Jesus. Keep that in mind when you read comments like that and all will make senseStuka87 - Thursday, September 24, 2015 - link

nVidia is a primary sponsor of the Unreal Engine.RussianSensation - Thursday, September 24, 2015 - link

UE4 is not a brand agnostic engine. In fact, every benchmark you see on UE4 has GTX970 beating 290X.I have summarized the recent UE4 games where 970 beats 290X easily:

http://forums.anandtech.com/showpost.php?p=3772288...

In Fable Legends, a UE4 DX12 benchmark, a 925mhz HD7970 crushes the GTX960 by 32%, while an R9 290X beats GTX970 by 13%. Those are not normal results for UE4 games that have favoured NV's Maxwell architecture.

Furthermore, we are seeing AMD cards perform exceptionally well at lower resolutions, most likely because DX12 helped resolve their DX11 API draw-call bottleneck. This is a huge boon for GCN moving forward if more DX12 games come out.

Looking at other websites, a $280 R9 390 is on the heels of a $450 GTX980.

http://techreport.com/review/29090/fable-legends-d...

So really besides 980Ti (TechReport uses a heavily factory pre-overclocked Asus Strix 980TI that boosts to 1380mhz out of the box), the entire stack of NV's cards from $160-500 loses badly to GCN in terms of expected price/performance.

We should wait for the full game's release and give NV/AMD time to upgrade their drivers but thus far the performance in Ashes and Fable Legends is looking extremely strong for AMD's cards.