Cerebras Unveils Wafer Scale Engine Two (WSE2): 2.6 Trillion Transistors, 100% Yield

by Dr. Ian Cutress on April 20, 2021 2:00 PM EST

The last few years have seen a glut of processors enter the market with the sole purpose of accelerating artificial intelligence and machine learning workloads. Due to the different types of machine learning algorithms possible, these processors are often focused on a few key areas, but one thing limits them all – how big you can make the processor. Two years ago Cerebras unveiled a revolution in silicon design: a processor as big as your head, using as much area on a 12-inch wafer as a rectangular design would allow, built on 16nm, focused on both AI and HPC workloads. Today the company is launching its second generation product, built on TSMC 7nm, with more than double the cores and more than double almost everything else.

Second Generation Wafer Scale Engine

The new processor from Cerebras builds on the first by moving to TSMC’s N7 process. This allows the logic to scale down, as well as to some extent the SRAMs, and now the new chip has 850,000 AI cores on board. Basically almost everything about the new chip is over 2x:

Cerebras Wafer Scale

| AnandTech | Wafer Scale Engine Gen1 | Wafer Scale Engine Gen2 | Increase |
|---|---|---|---|
| AI Cores | 400,000 | 850,000 | 2.13x |
| Manufacturing | TSMC 16nm | TSMC 7nm | - |
| Launch Date | August 2019 | Q3 2021 | - |
| Die Size | 46225 mm2 | 46225 mm2 | - |
| Transistors | 1200 billion | 2600 billion | 2.17x |
| (Density) | 25.96 MTr/mm2 | 56.246 MTr/mm2 | 2.17x |
| On-board SRAM | 18 GB | 40 GB | 2.22x |
| Memory Bandwidth | 9 PB/s | 20 PB/s | 2.22x |
| Fabric Bandwidth | 100 Pb/s | 220 Pb/s | 2.20x |
| Cost | $2 million+ | arm+leg | ‽ |
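As a quick sanity check, the Increase column and the density row can be recomputed from the raw figures in the table. The snippet below is only a sketch: the dictionary layout and function names are illustrative choices, and the ratios are left unrounded so that the rounding convention does not affect the result.

```python
# Figures copied from the table above, as (Gen1, Gen2) pairs.
DIE_AREA_MM2 = 46_225  # identical for both generations

specs = {
    "AI cores": (400_000, 850_000),
    "Transistors (billions)": (1_200, 2_600),
    "On-board SRAM (GB)": (18, 40),
    "Memory bandwidth (PB/s)": (9, 20),
    "Fabric bandwidth (Pb/s)": (100, 220),
}


def increase(gen1, gen2):
    """Gen2 / Gen1 ratio, left unrounded."""
    return gen2 / gen1


def density_mtr_per_mm2(transistors_billions):
    """Millions of transistors per square millimetre of die area."""
    return transistors_billions * 1_000 / DIE_AREA_MM2


ratios = {name: increase(g1, g2) for name, (g1, g2) in specs.items()}
densities = tuple(
    density_mtr_per_mm2(t) for t in specs["Transistors (billions)"]
)

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.3f}x")
print(f"Density: {densities[0]:.2f} -> {densities[1]:.2f} MTr/mm2")
```

Because the die area is unchanged between generations, the density ratio is necessarily the same as the transistor-count ratio.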

As with the original processor, known as the Wafer Scale Engine (WSE-1), the new WSE-2 features hundreds of thousands of AI cores across a massive 46225 mm2 of silicon. In that space, Cerebras has enabled 2.6 trillion transistors for 850,000 cores - by comparison, the second biggest AI processor on the market is ~826 mm2, with 0.054 trillion transistors. Cerebras also cites 1000x more onboard memory, with 40 GB of SRAM, compared to 40 MB on the Ampere A100.
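To put those comparisons on one scale, the sketch below divides the WSE-2 figures by the figures quoted for the next-largest chip. The variable names are illustrative, and decimal units (1 GB = 1000 MB) are assumed for the memory comparison.

```python
# Figures quoted in the paragraph above.
wse2 = {"area_mm2": 46_225, "transistors": 2.6e12, "sram_bytes": 40e9}
# Next-largest chip as quoted in the text (~826 mm2, 0.054 trillion, 40 MB).
other = {"area_mm2": 826, "transistors": 0.054e12, "sram_bytes": 40e6}

# How many times larger the WSE-2 is on each axis.
factors = {key: wse2[key] / other[key] for key in wse2}

for key, factor in factors.items():
    print(f"{key}: {factor:,.1f}x")
```

On these numbers the area gap (roughly 56x) is somewhat larger than the transistor gap (roughly 48x), while the on-chip memory gap is the 1000x that Cerebras cites.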

Me with Wafer Scale Gen1 - looks the same, but with less than half the cores.

The cores are connected with a 2D Mesh with FMAC datapaths. Cerebras achieves 100% yield by designing a system in which any manufacturing defect can be bypassed – initially Cerebras had 1.5% extra cores to allow for defects, but we’ve since been told this was way too much as TSMC's process is so mature. Cerebras’ goal with WSE is to provide a single platform, designed through innovative patents, that allows for bigger processors useful in AI calculations, and that has also been extended into a wider array of HPC workloads.
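The idea of bypassing defects with spare cores can be illustrated with a toy model. This is not Cerebras' actual mechanism, which is not described here and operates on a 2D mesh; the sketch assumes a single row of cores, a known set of defective positions, and a hypothetical helper `map_logical_to_physical` that hands each logical core the next working physical core.

```python
def map_logical_to_physical(num_physical, defective, num_logical):
    """Return a list where entry i is the physical core backing logical core i.

    Defective physical cores are skipped. Raises ValueError when there are
    not enough working cores left to back every logical core.
    """
    working = [p for p in range(num_physical) if p not in defective]
    if len(working) < num_logical:
        raise ValueError("not enough working cores to cover all logical cores")
    return working[:num_logical]


# Toy numbers: 1000 logical cores plus 1.5% spares gives 1015 physical cores.
NUM_LOGICAL = 1000
NUM_PHYSICAL = NUM_LOGICAL + int(NUM_LOGICAL * 0.015)
DEFECTS = {3, 500, 1014}  # hypothetical defect positions

mapping = map_logical_to_physical(NUM_PHYSICAL, DEFECTS, NUM_LOGICAL)
print(len(mapping), "logical cores mapped;", "logical 3 -> physical", mapping[3])
```

In this model the part "yields" as long as the number of defects does not exceed the number of spares, which is why a mature process needs fewer spare cores.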

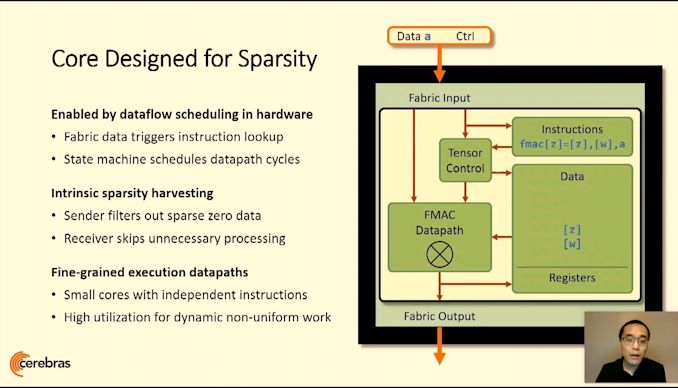

Building on First Gen WSE

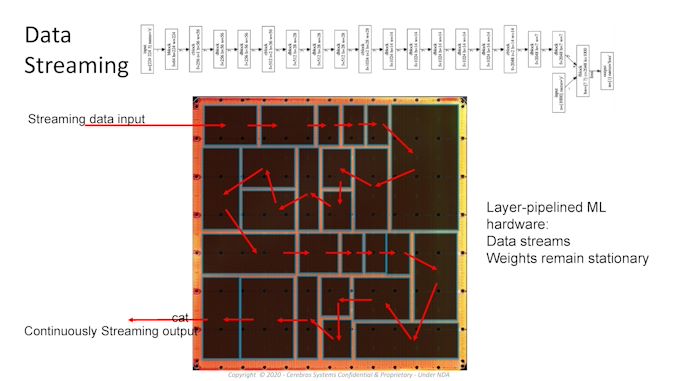

A key to the design is the custom graph compiler, which takes PyTorch or TensorFlow and maps each layer to a physical part of the chip, allowing for asynchronous compute as the data flows through. Having such a large processor means the data never has to go off-die and wait in memory, wasting power, and can continually be moved onto the next stage of the calculation in a pipelined fashion. The compiler and processor are also designed with sparsity in mind, allowing high utilization regardless of batch size and enabling parameter search algorithms to run simultaneously.

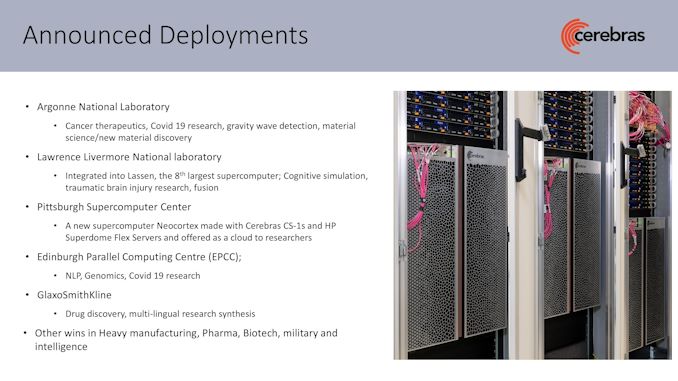

Cerebras’ first generation WSE is sold as a complete system called CS-1, and the company has several dozen customers with deployed systems up and running, including a number of research laboratories, pharmaceutical companies, biotechnology research, military, and the oil and gas industries. Lawrence Livermore has a CS-1 paired to its 23 PFLOP ‘Lassen’ Supercomputer. Pittsburgh Supercomputing Center purchased two systems with a $5m grant, and these systems are attached to their Neocortex supercomputer, allowing for simultaneous AI and enhanced compute.

Products and Partnerships

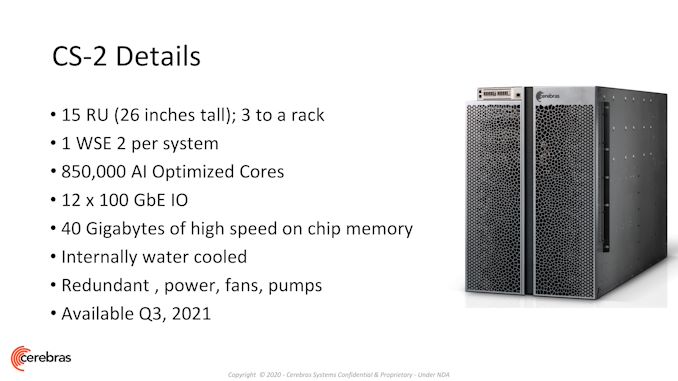

Cerebras sells complete CS-1 systems today as a 15U box that contains one WSE-1 along with 12x100 GbE, twelve 4 kW power supplies (6 redundant, peak power about 23 kW), and deployments at some institutions are paired with HPE’s SuperDome Flex. The new CS-2 system shares this same configuration, albeit with more than double the cores and double the on-board memory, but still within the same power. Compared to other platforms, these processors are arranged vertically inside the 15U design in order to enable ease of access as well as built-in liquid cooling across such a large processor. It should also be noted that those front doors are machined from a single piece of aluminium.
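The power and networking figures above can be checked with simple arithmetic. The sketch assumes that "6 redundant" means six of the twelve supplies are spare capacity, so the remaining six must carry the full load; that reading is an assumption, not something the specification states.

```python
# Figures quoted in the paragraph above.
PSU_COUNT = 12
PSU_KW = 4
REDUNDANT_PSUS = 6        # assumption: six supplies are spare capacity
PEAK_POWER_KW = 23
ETH_PORTS = 12
ETH_GBPS_PER_PORT = 100

# Power available if all redundant supplies are out of service.
usable_kw = (PSU_COUNT - REDUNDANT_PSUS) * PSU_KW
headroom_kw = usable_kw - PEAK_POWER_KW

# Aggregate Ethernet bandwidth into the box, in Tb/s.
aggregate_tbps = ETH_PORTS * ETH_GBPS_PER_PORT / 1000

print(f"Usable power: {usable_kw} kW ({headroom_kw} kW above peak)")
print(f"Aggregate network bandwidth: {aggregate_tbps} Tb/s")
```

Under that assumption, six supplies provide 24 kW, just above the quoted 23 kW peak, and the twelve 100 GbE links add up to 1.2 Tb/s.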

What makes Cerebras’ design unique is its ability to go beyond the physical size limit normally encountered in manufacturing, known as the reticle limit. Processors are designed with this limit as the maximum size of a chip, as connecting two areas with a cross-reticle connection is difficult. This is part of the secret sauce that Cerebras brings to the table, and the company remains the only one offering a processor on this scale – the same patents that Cerebras developed and was awarded to build these large chips are still in play here, and the second gen WSE will be built into CS-2 systems with a similar design to CS-1 in terms of connectivity and visuals.

The same compiler and software packages, with updates, enable any customer that has been trialling AI workloads with the first system to use the second at the point at which they deploy one. Cerebras has been working on higher-level implementations so that customers with standardized TensorFlow and PyTorch models can quickly bring over their existing GPU code by adding three lines of code and using Cerebras’ graph compiler. The compiler then divides the 850,000 cores into segments, one per layer, that allow data to flow in a pipelined fashion without stalls. The silicon can also be used for multiple networks simultaneously for parameter search.
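How the graph compiler actually sizes each layer's segment is not described here. One simple way to picture it is to give each layer a share of the cores proportional to its compute cost, so that every pipeline stage takes roughly the same time. The sketch below makes exactly that assumption; `allocate_cores`, the layer names, and the work figures are all hypothetical and are not part of Cerebras' software.

```python
def allocate_cores(layer_work, total_cores):
    """Split total_cores across layers in proportion to integer work units.

    Uses the largest-remainder method so the shares always sum to
    total_cores exactly.
    """
    total_work = sum(layer_work.values())
    # Start with the rounded-down proportional share for each layer.
    alloc = {
        name: (work * total_cores) // total_work
        for name, work in layer_work.items()
    }
    leftover = total_cores - sum(alloc.values())
    # Hand the few remaining cores to the layers with the largest remainders.
    by_remainder = sorted(
        layer_work,
        key=lambda name: (layer_work[name] * total_cores) % total_work,
        reverse=True,
    )
    for name in by_remainder[:leftover]:
        alloc[name] += 1
    return alloc


# Hypothetical three-layer network with relative work of 1 : 2 : 1.
layers = {"conv1": 1, "conv2": 2, "fc": 1}
allocation = allocate_cores(layers, 850_000)
print(allocation)
```

Balancing stages this way is what keeps a pipeline free of stalls: a stage given too few cores would become the bottleneck for every stage downstream of it.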

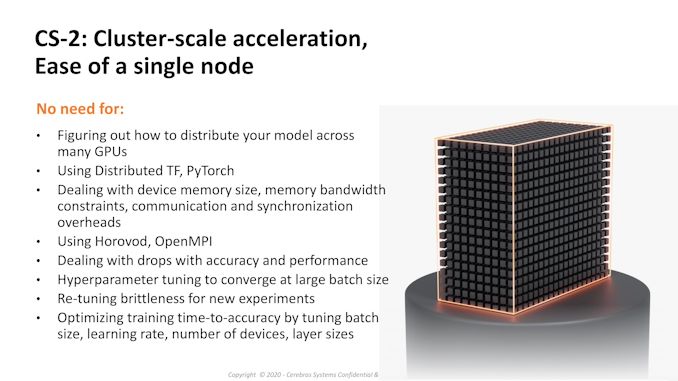

Cerebras states that having such a large single-chip solution means that the point at which distributed training across hundreds of AI chips becomes necessary is now so much further away that this excess complication is not needed in most scenarios – to that end, we’re seeing CS-1 deployments of single systems attached to supercomputers. However, Cerebras is keen to point out that two CS-2 systems will deliver 1.7 million AI cores in a standard 42U rack, or three systems for 2.55 million in a larger 46U rack (assuming there’s sufficient power for all at once!), replacing a dozen racks of alternative compute hardware. At Hot Chips 2020, Chief Hardware Architect Sean Lie stated that one of Cerebras' key benefits to customers was the ability to enable workload simplification that previously required racks of GPU/TPU but instead can run on a single WSE in a computationally relevant fashion.
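The rack figures follow from the per-system numbers. The sketch below assumes each CS-2 occupies the same 15U as the CS-1 and draws the same ~23 kW peak, as the configuration is said to be shared; `rack_summary` is an illustrative helper.

```python
CORES_PER_SYSTEM = 850_000
SYSTEM_HEIGHT_U = 15     # assumption: CS-2 keeps the CS-1's 15U chassis
PEAK_POWER_KW = 23       # assumption: same peak power as CS-1


def rack_summary(systems, rack_height_u):
    """Total cores, rack space, and peak power for a number of systems."""
    used_u = systems * SYSTEM_HEIGHT_U
    if used_u > rack_height_u:
        raise ValueError(f"{systems} systems need {used_u}U, rack has {rack_height_u}U")
    return {
        "cores": systems * CORES_PER_SYSTEM,
        "used_u": used_u,
        "free_u": rack_height_u - used_u,
        "peak_kw": systems * PEAK_POWER_KW,
    }


print(rack_summary(2, 42))
print(rack_summary(3, 46))
```

Three systems leave only 1U free in a 46U rack and would peak at around 69 kW under these assumptions, which is why the power caveat matters.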

As a company, Cerebras has ~300 staff across Toronto, San Diego, Tokyo, and San Francisco. They have dozens of customers already with CS-1 deployed and a number more already trialling CS-2 remotely as they bring up the commercial systems. Beyond AI, Cerebras is getting a lot of interest from typical commercial high performance compute markets, such as oil-and-gas and genomics, because the flexibility of the chip enables fluid dynamics and other compute simulations. Deployments of CS-2 will occur later this year in Q3, and the price has risen from ~$2-3 million to ‘several’ million.

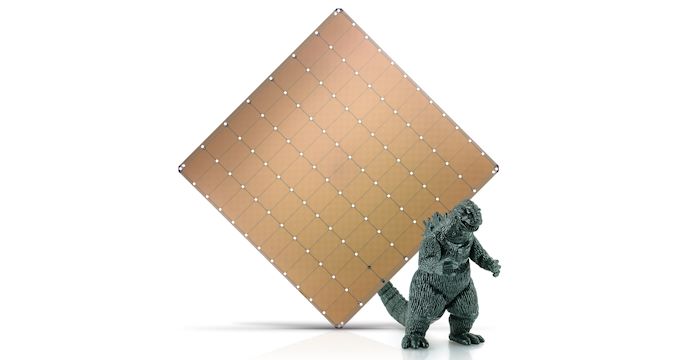

With Godzilla for a size reference

Related Reading

- Cerebras Wafer Scale Engine News: DoE Supercomputer Gets 400,000 AI Cores

- 342 Transistors for Every Person In the World: Cerebras 2nd Gen Wafer Scale Engine Teased

- Cerebras’ Wafer Scale Engine Scores a Sale: $5m Buys Two for PSC

- Hot Chips 2020 Live Blog: Cerebras WSE Programming (3:00pm PT)

- Hot Chips 2019 Live Blog: Cerebras' 1.2 Trillion Transistor Deep Learning Processor

136 Comments

GeoffreyA - Friday, April 23, 2021 - link

Oppressive indeed. I suppose we'll just have to send Sarah Connor and Arnie in there to do their stuff. Ironically, Cerebras doesn't sound too far from "Cyberdyne."

Part of me can't wait for the advent of machine consciousness but another part worries about the dangers. I reckon it'll never be like Terminator or Matrix paints, but rather they might excel and beat us across the board, rendering us "obsolete." We'll really be the stone-age human brain. Eniac competing with Epyc.

mode_13h - Friday, April 23, 2021 - link

In the near term, we have a lot more to fear from AI being used by the powerful to optimize, manipulate, and oppress societies for their own gain.

Taking the extreme case of that, I see certain countries treating AI as the key component of "authoritarianism in a box", and exporting it to large parts of the developing (and developed!) world.

GeoffreyA - Friday, April 23, 2021 - link

You're right. As it is, we're being manipulated left, right, and centre. As the power grows, so will the misuse of it. The trick is, not letting the oppressed know they're in chains and giving them the illusion of choice. No stun batons or Civil Protection needed. Rather, a more up-to-date, Brave New World style. At the forefront of such progress will be those impeccable companies who care so much about us, Apple, Google, Facebook, and co.

mode_13h - Saturday, April 24, 2021 - link

Uh, I was thinking more like how China rolls, as far as the "authoritarianism in a box" model. China is perfecting the most extreme form of its totalitarian control on the Uyghur population, as we speak. It's like straight out of 1984, for real.

GeoffreyA - Saturday, April 24, 2021 - link

That's quite eye-opening. I remember, at the start of Covid last year, I was reading a bit about the Uyghurs and only saw the re-education part, and forgot about them. Took a look now and am shaking my head. I don't know what to say.

mode_13h - Saturday, April 24, 2021 - link

Yeah, I didn't think they'd ever do anything worse than Tiananmen, but I guess it's easier to do awful things in the hinterland, to people who don't look like you or share your same culture. The saddest part is that there seems to be nothing anyone can really do to stop it. In the long term, having diverse supply-chains will be key, though China has done a lot to seize the world's natural resources, over the past decade.

Now, here's the scary part: if you're trying to sit atop an unstable country, you and your officials can go to a training program in China (pre-pandemic), where they will teach you "governing principles and practices". No doubt, that's part sales-pitch for various surveillance products and systems. The other thing China gets out of it is to avoid having a new government come in that won't honor their country's debts, incurred under programs like "belt and road".

GeoffreyA - Sunday, April 25, 2021 - link

As the 20th century showed, mankind is capable of terrible things, even when the world is at its most civilised.

Touching on the second part, if any country is suited to teaching others how to wield the rod, it's China. I've noticed it, too, they seem eager to help, but the question is, does one wish to take that help? What is the cost? We all know that taking help puts one in the helper's debt, especially if the latter has some motive and isn't doing it purely out of love.

Even my country, South Africa, has particularly close ties to China (they're all part of BRICS), and we sometimes wonder, or rather worry, how much influence China is trying to gain. How many ideas they're putting in our government's head, and how many subtle forms of control they're gaining, from an economic point of view.

mode_13h - Sunday, April 25, 2021 - link

> we sometimes wonder, or rather worry, how much influence China is trying to gain.

I'd say look at the big infrastructure & natural resource projects and see who's funding them. Better yet, if you can find the terms of the deal, that would be most enlightening.

The softer form of power one can wield, fueled by AI, is tilting of elections through things like targeted advertising and engineering social unrest. We know that people tend to vote a certain way, when they're scared. There are messages that can be targeted to those likely to support your opponent that create a sense of apathy or hopelessness to have them stay home, on election day. AI can be used to figure out just the right messages to send each person. I wish the online platforms would all ban targeted political advertising.

GeoffreyA - Monday, April 26, 2021 - link

There have been a lot of loans. Also, in 2018, a heap of money to Eskom, our struggling power utility, who is like a patient on life support, thanks to corruption, mismanagement, and aging infrastructure; we have "load shedding" all the time, what the power cuts are called. Anyhow, I get the feeling our government has been keeping its distance from China of late, though who knows what's going on behind the scenes.

With regard to AI and voting/unrest, that's horribly plausible and alarming. Those things can do this far more effectively than any quack human could on YouTube or Twitter. Already, I can picture a dystopia, Blade Runner like future, with all those ads on the sides of buildings, manipulating us to buy this, "cause you're worth it," or vote for Party X, "because they'll pave a brighter future for Little Timmy, together."

Threska - Sunday, April 25, 2021 - link

Yes, well that's the interesting thing about technology. It's not the exclusive domain of any oppressors. Those who want freedom can use it as well.