Apple Announces The Apple Silicon M1: Ditching x86 - What to Expect, Based on A14

by Andrei Frumusanu on November 10, 2020 3:00 PM EST. Posted in: Apple, Apple A14, Apple Silicon, Apple M1

Today, Apple has unveiled their brand-new MacBook line-up. This isn’t an ordinary release – if anything, the move that Apple is making today is something that hasn’t happened in 15 years: The start of a CPU architecture transition across their whole consumer Mac line-up.

Thanks to the company’s vertical integration across hardware and software, this is a monumental change that nobody but Apple can so swiftly usher in. The last time Apple ventured into such an undertaking, in 2006, the company ditched IBM’s PowerPC ISA and processors in favor of Intel x86 designs. Today, Intel is being ditched in favor of the company’s own in-house processors and CPU microarchitectures, built upon the Arm ISA.

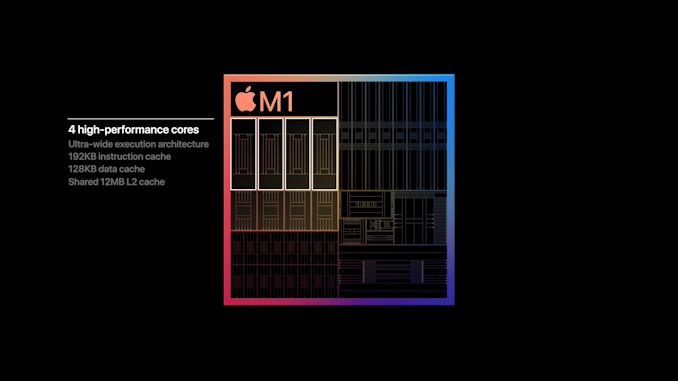

The new processor is called the Apple M1, the company’s first SoC designed with Macs in mind. With four large performance cores, four efficiency cores, and an 8-core GPU, it features 16 billion transistors on a 5nm process node. Apple is starting a new SoC naming scheme for this new family of processors, but at least on paper it looks a lot like an A14X.

Today’s event contained a ton of new official announcements, but also was lacking (in typical Apple fashion) in detail. Today, we’re going to be dissecting the new Apple M1 news, as well as doing a microarchitectural deep dive based on the already-released Apple A14 SoC.

The Apple M1 SoC: An A14X for Macs

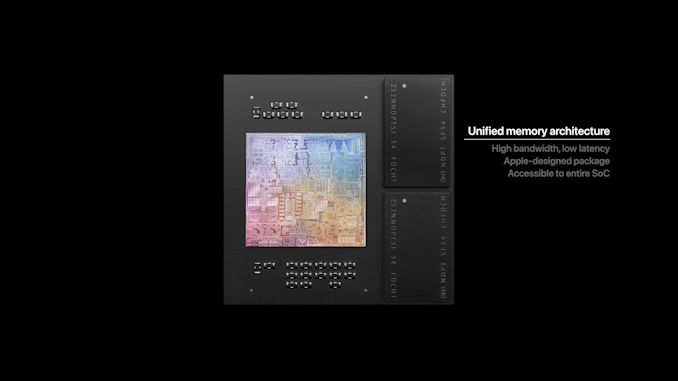

The new Apple M1 is really the start of a new major journey for Apple. During Apple’s presentation the company didn’t divulge much in the way of details for the design; however, there was one slide that told us a lot about the chip’s packaging and architecture:

This packaging style with DRAM embedded within the organic packaging isn't new for Apple; they've been using it since the A12. However, it's something that's only sparingly used. When it comes to higher-end chips, Apple likes to use this kind of packaging instead of the usual smartphone PoP (package on package) because these chips are designed with higher TDPs in mind. Keeping the DRAM off to the side of the compute die rather than on top of it helps to ensure that these chips can still be efficiently cooled.

What this also means is that we’re almost certainly looking at a 128-bit DRAM bus on the new chip, much like that of previous generation A-X chips.
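As a rough sketch of what a 128-bit bus would mean for theoretical peak bandwidth: the calculation below multiplies the bus width by the transfer rate. Apple did not state a memory speed at the event, so the 4266 MT/s figure (LPDDR4X-4266) is purely an assumption for illustration, and the function name is our own.

```python
def peak_dram_bandwidth_gbs(bus_width_bits: int, mega_transfers_per_s: float) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8           # bits -> bytes moved per transfer
    transfers_per_second = mega_transfers_per_s * 1e6
    return bytes_per_transfer * transfers_per_second / 1e9


# ASSUMPTION: 4266 MT/s is an illustrative transfer rate, not an announced spec.
ASSUMED_MTS = 4266
BUS_WIDTH_BITS = 128  # the bus width inferred in the text above

bandwidth = peak_dram_bandwidth_gbs(BUS_WIDTH_BITS, ASSUMED_MTS)
print(f"{BUS_WIDTH_BITS}-bit bus at {ASSUMED_MTS} MT/s: {bandwidth:.1f} GB/s peak")
```

Under that assumed transfer rate the peak works out to roughly 68 GB/s; a different memory speed would scale the figure linearly.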

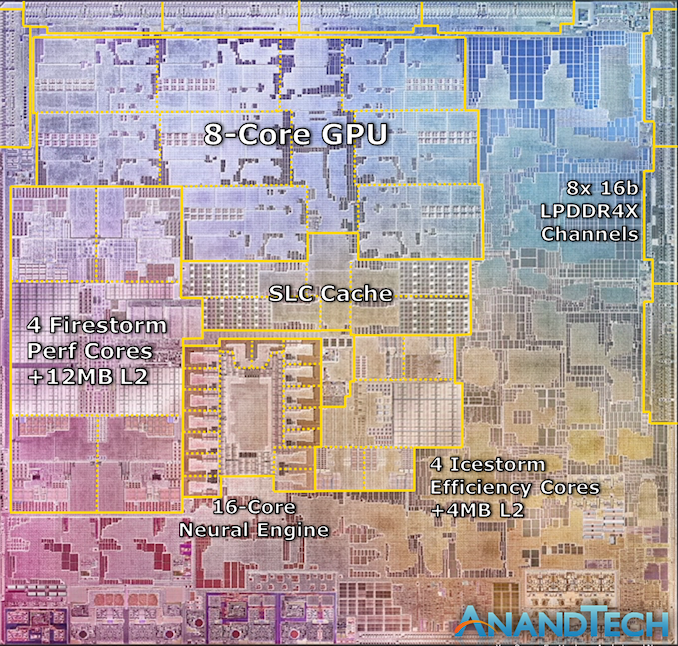

On the very same slide, Apple also seems to have used an actual die shot of the new M1 chip. It perfectly matches Apple’s described characteristics of the chip, and it looks like a real photograph of the die. Cue what's probably the quickest die annotation I’ve ever made:

We can see the M1’s four Firestorm high-performance CPU cores on the left side. Notice the large amount of cache – the 12MB cache was one of the surprise reveals of the event, as the A14 still only featured 8MB of L2 cache. The new cache here looks to be partitioned into 3 larger blocks, which makes sense given Apple’s transition from 8MB to 12MB for this new configuration; it is, after all, now being used by 4 cores instead of 2.

Meanwhile the 4 Icestorm efficiency cores are found near the center of the SoC, above which we find the SoC’s system level cache, which is shared across all IP blocks.

Finally, the 8-core GPU takes up a significant amount of die space and is found in the upper part of this die shot.

What’s most interesting about the M1 here is how it compares to other CPU designs by Intel and AMD. All the aforementioned blocks still cover only part of the whole die, with a significant amount of area going to auxiliary IP. Apple made mention that the M1 is a true SoC, including the functionality of what previously were several discrete chips inside of Mac laptops, such as I/O controllers and Apple's SSD and security controllers.

The new CPU core is what Apple claims to be the world’s fastest. This is going to be a centre-point of today’s article as we dive deeper into the microarchitecture of the Firestorm cores, as well as look at the performance figures of the very similar Apple A14 SoC.

With its additional cache, we expect the Firestorm cores used in the M1 to be even faster than what we’re going to be dissecting today with the A14, so Apple’s claim of having the fastest CPU core in the world seems extremely plausible.

The whole SoC features a massive 16 billion transistors, which is 35% more than the A14 inside of the newest iPhones. If Apple was able to keep the transistor density between the two chips similar, we should expect a die size of around 120mm². This would be considerably smaller than past generations of Intel chips inside of Apple's MacBooks.
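The die size estimate above is a simple density scaling, which can be sketched as follows. The A14 figures used here (11.8 billion transistors, roughly 88mm²) are not stated in this section and are treated as assumptions; the helper name is hypothetical.

```python
# ASSUMPTIONS: A14 reference figures are not given in the text above.
A14_TRANSISTORS = 11.8e9    # assumed A14 transistor count
A14_DIE_AREA_MM2 = 88.0     # assumed A14 die area in mm^2
M1_TRANSISTORS = 16e9       # the 16 billion figure quoted above


def estimate_die_area_mm2(transistors: float,
                          ref_transistors: float,
                          ref_area_mm2: float) -> float:
    """Scale a reference die area by transistor count at constant density."""
    density = ref_transistors / ref_area_mm2   # transistors per mm^2
    return transistors / density


increase = M1_TRANSISTORS / A14_TRANSISTORS - 1
m1_area = estimate_die_area_mm2(M1_TRANSISTORS, A14_TRANSISTORS, A14_DIE_AREA_MM2)

print(f"Transistor increase over A14: {increase:.1%}")
print(f"Estimated M1 die area at equal density: {m1_area:.0f} mm^2")
```

With these inputs the increase comes out just under 36% and the area just under 120mm², in line with the rounded figures in the text. Any real difference in density between the two chips would shift the estimate accordingly.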

Road To Arm: Second Verse, Same As The First

Section by Ryan Smith

The fact that Apple can even pull off a major architectural transition so seamlessly is a small miracle, and one that Apple has quite a bit of experience in accomplishing. After all, this is not Apple’s first time switching CPU architectures for their Mac computers.

The long-time PowerPC company came to a crossroads around the middle of the 2000s when the Apple-IBM-Motorola (AIM) alliance, responsible for PowerPC development, increasingly struggled with further chip development. IBM’s PowerPC 970 (G5) chip put up respectable performance numbers in desktops, but its power consumption was significant. This left the chip non-viable for use in the growing laptop segment, where Apple was still using Motorola’s PowerPC 7400 series (G4) chips, which did have better power consumption, but not the performance needed to rival what Intel would eventually achieve with its Core series of processors.

And thus, Apple played a card that they held in reserve: Project Marklar. Leveraging the flexibility of Mac OS X and its underlying Darwin kernel, which like other Unixes is designed to be portable, Apple had been maintaining an x86 version of Mac OS X. Though initially considered largely an exercise in good coding practices – making sure Apple was writing OS code that wasn’t unnecessarily bound to PowerPC and its big-endian memory model – Marklar became Apple’s exit strategy from a stagnating PowerPC ecosystem. The company would switch to x86 processors – specifically, Intel’s x86 processors – upending its software ecosystem, but also opening the door to much better performance and new customer opportunities.

The switch to x86 was by all metrics a big win for Apple. Intel’s processors delivered better performance-per-watt than the PowerPC processors that Apple left behind, and especially once Intel launched the Core 2 (Conroe) series of processors in late 2006, Intel firmly established itself as the dominant force for PC processors. This ultimately set up Apple’s trajectory over the coming years, allowing them to become a laptop-focused company with proto-ultrabooks (MacBook Air) and their incredibly popular MacBook Pros. Similarly, x86 brought with it Windows compatibility, introducing the ability to directly boot Windows, or alternatively run it in a very low overhead virtual machine.

The cost of this transition, however, came on the software side of matters. Developers would need to start using Apple’s newest toolchains to produce universal binaries that could work on PPC and x86 Macs – and not all of Apple’s previous APIs would make the jump to x86. Developers of course made the jump, but it was a transition without a true precedent.

Bridging the gap, at least for a bit, was Rosetta, Apple’s PowerPC translation layer for x86. Rosetta would allow most PPC Mac OS X applications to run on the x86 Macs, and though performance was a bit hit-and-miss (PPC on x86 isn’t the easiest thing), the higher performance of the Intel CPUs helped to carry things for most non-intensive applications. Ultimately Rosetta was a band-aid for Apple, and one Apple ripped off relatively quickly; Apple had already dropped Rosetta by the time of Mac OS X 10.7 (Lion) in 2011. So even with Rosetta, Apple made it clear to developers that they expected them to update their applications for x86 if they wanted to keep selling them and to keep users happy.

Ultimately, the PowerPC to x86 transition set the tone for the modern, agile Apple. Since then, Apple has created a whole development philosophy around going fast and changing things as they see fit, with only limited regard to backwards compatibility. This has given users and developers few options but to enjoy the ride and keep up with Apple’s development trends. But it has also given Apple the ability to introduce new technologies early, and if necessary, break old applications so that new features aren’t held back by backwards compatibility woes.

All of this has happened before, and it will all happen again starting next week, when Apple launches their first Apple M1-based Macs. Universal binaries are back, Rosetta is back, and Apple’s push to developers to get their applications up and running on Arm is in full force. The PPC to x86 transition created the template for Apple for an ISA change, and following that successful transition, they are going to do it all over again over the next few years as Apple becomes their own chip supplier.

A Microarchitectural Deep Dive & Benchmarks

On the following page we’ll be investigating the A14’s Firestorm cores, which will be used in the M1 as well, and also doing some extensive benchmarking on the iPhone chip, setting the stage as the minimum of what to expect from the M1:

644 Comments


pcordes - Thursday, November 19, 2020 - link

Thanks for the microarchitectural testing and details! However, some current Intel / AMD numbers you use for comparison aren't right. (Also, your ROB-size and load/store buffers graphs are missing labels on the vertical axis; I assume that's time or cycles or something with.)

Intel since Skylake has 5-wide legacy decode (up from 4-wide in Haswell) and 6-wide fetch from the decoded-uop cache. (The issue/rename stage is still only 4-wide in Skylake, but widened to 5 in Ice Lake. Being wider earlier in the front-end can catch up after stalls, letting buffers between stages hide bubbles) https://en.wikichip.org/wiki/intel/microarchitectu...

https://en.wikichip.org/wiki/intel/microarchitectu...

(The decoders can also macro-fuse a cmp/jcc branch into 1 uop, so max decode throughput is actually 7 x86 instructions per clock, into 5 uops.)

AMD Zen 2 can decode up to 4 x86 instructions per clock. (Not sure if that includes fusion of cmp/jcc or not). This is probably where you got your 4-wide number that you claimed applied to Intel. But that's just legacy-decode. Most code spends a lot of time in non-huge loops, and they can run from the uop cache. Zen 2's decoded-uop cache can produce up to 8 uops/clock.

https://en.wikichip.org/wiki/amd/microarchitecture...

The actual bottleneck for sending instructions into the out-of-order back-end is the issue/rename stage as usual: 6 uops, but I think those can only come from up to 5 x86 instructions. I thought I remembered reading that Zen 1 could only sustain 6 uops / clock when running code that included some AVX 256-bit instructions or other 2-uop instructions. Maybe that changed with Zen2 (where most 256-bit SIMD instructions are still 1 uop), I don't have an AMD system to test on, and stuff like https://uops.info/ only tests throughput of single instructions, not a mix of integer, FP, and/or loads/store.

Anyway, Zen's front-end is at least 5-wide, and 6-wide for at least some purposes.

---

You seem to be saying M1 can do 4 FADDs *and* 4 FMULs in the same cycle. That doesn't make any sense with "only" 4 FP execution units. Perhaps you mean 4 FMAs per cycle? Or can each execution unit really accept 2 instructions in the same clock cycle, like Pentium 4's double-pumped integer ALUs?

That's only twice the throughput of Haswell/Skylake, or the same throughput if you take vector width into account, assuming Apple M1 doesn't have ARM SVE for wider vectors.

(Skylake has FMA units on ports 0 and 1, each 256-bit wide. FP mul / add also run on those same units, all with 4-cycle latency. So using FMAs instead of `vaddps` or `vmulps` gives Skylake twice the FLOPS because an FMA counts as two FLOPs, despite being a single operation for the pipeline.)
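To make the vector-width comparison concrete, here is a small sketch of peak single-precision FLOPs per cycle. The Skylake inputs (2 FMA units, 256-bit) come from the text above; the M1 inputs (4 FMA-capable units, 128-bit vectors) are assumptions following the "4 FMAs per cycle" and "no SVE" hypotheses, not measured facts. The function name is made up for illustration.

```python
def peak_flops_per_cycle(fma_units: int, vector_bits: int, element_bits: int = 32) -> int:
    """Peak FLOPs/cycle when every FMA unit issues one full-width FMA per cycle."""
    lanes = vector_bits // element_bits   # elements processed per vector instruction
    return fma_units * lanes * 2          # each FMA counts as 2 FLOPs (multiply + add)


# Skylake: FMA units on ports 0 and 1, each 256 bits wide (as described above).
skylake = peak_flops_per_cycle(fma_units=2, vector_bits=256)

# ASSUMPTION: M1 with 4 FMA-capable units and 128-bit vectors (no wider SVE).
m1_assumed = peak_flops_per_cycle(fma_units=4, vector_bits=128)

print(f"Skylake:      {skylake} SP FLOPs/cycle")
print(f"M1 (assumed): {m1_assumed} SP FLOPs/cycle")
```

Under these assumptions both come out equal per cycle: twice the FMA instruction throughput on the M1 side is offset by half the vector width.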

Zen2 runs vaddps on ports FP2 / FP3, and vmulps or vfma...ps on FP0 / FP1. So it can sustain 2/clock FADD *and* 2/clock FMUL/FMA, unlike Skylake that can only do a total of 2 FP ops per cycle. (Both with any width from scalar to 256-bit). Zen1 has the same port allocations, but the execution units are only 128 bits wide. (Numbers from https://uops.info/)

https://en.wikichip.org/wiki/amd/microarchitecture... doesn't indicate any more FMA or SIMD FP mul/add throughput, except reduced competition from FP store and FP->int.

You weren't looking at actual legacy x87 `fadd` / `fmul` instruction mnemonics were you? Modern x86 does FP math using SSE / AVX instructions like scalar addsd / mulsd (sd = scalar double), with fewer execution units for legacy 80-bit x87. (Unfortunately FMA isn't baseline, only available as an extension, unlike with AArch64.)

LYP - Sunday, May 23, 2021 - link

I'm happy that I'm not the only one who thinks there is something wrong here ...

peevee - Wednesday, December 9, 2020 - link

"Intel has stagnated itself out of the market, and has lost a major customer today."

A decade+ concentrating on "diversity and inclusion" vs competency can do that to you. Their biggest problem today might be the Portland location and culture.

IntoGraphics - Wednesday, December 16, 2020 - link

"If Apple’s performance trajectory continues at this pace, the x86 performance crown might never be regained."

If Apple's performance trajectory does continue at this pace, the x86 performance crown will be irrelevant.