Amazon's Arm-based Graviton2 Against AMD and Intel: Comparing Cloud Compute

by Andrei Frumusanu on March 10, 2020 8:30 AM EST

Posted in: Servers, CPUs, Cloud Computing, Amazon, AWS, Neoverse N1, Graviton2

Memory Subsystem & Latency

Memory performance in server chips is crucial given the sheer core count in the system. Amazon’s Graviton2 chip has the most modern memory capabilities of our test set thanks to 8 DDR4-3200 memory controllers, providing a theoretical peak bandwidth of up to 204GB/s. Also important are the SoC’s cache hierarchy and the latencies at which it’s able to access data.
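The 204GB/s figure follows from simple arithmetic, sketched below. The 8-byte (64-bit) data width per DDR4 channel is the standard DDR4 value rather than something stated in this article, and the function name is purely illustrative.

```python
def ddr_peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                           bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes).

    bus_bytes=8 assumes the standard 64-bit DDR4 channel width.
    """
    return channels * mega_transfers_per_s * 1e6 * bus_bytes / 1e9


# 8 channels of DDR4-3200, as quoted for the Graviton2
graviton2_peak = ddr_peak_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
print(f"{graviton2_peak:.1f} GB/s")  # 204.8 GB/s
```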

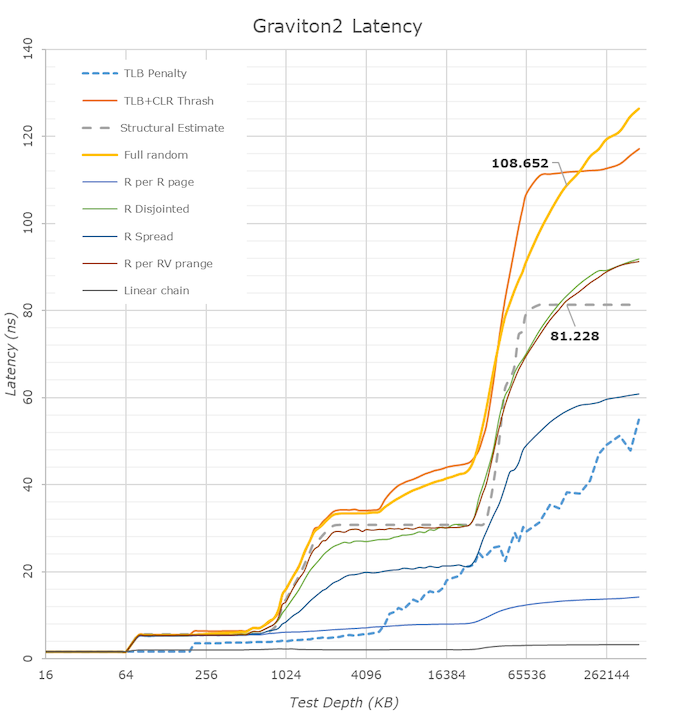

Looking at the linear latency graph results, let’s first focus on the DRAM region and see how the Graviton2 ends up relative to the competition.

Surprisingly enough, the Graviton2 does extremely well. Although the cache hierarchies of the designs are very different, at an arbitrary 128MB memory depth the three systems are near identical. We do see that the Graviton2’s full random latency increases at a higher rate than on the AMD and Intel systems the deeper we go into DRAM. The structural memory latency of the Amazon and AMD chips is near identical, meaning that the AMD system doing better further down in random accesses is probably due to better TLBs or page-table walkers.
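For readers unfamiliar with how a "full random" latency pattern is typically constructed, here is a minimal sketch. The article does not describe the internals of its test tool, so this is an assumption about the general technique: a pointer chase through a single random cycle, where each load depends on the previous one and prefetchers cannot predict the next address. The names `build_chase_cycle` and `chase` are hypothetical. Python's interpreter overhead dwarfs real memory latency, so this illustrates the access pattern only, not a usable measurement.

```python
import random
from typing import List


def build_chase_cycle(n_slots: int, seed: int = 0) -> List[int]:
    """Return next_index[] forming one cycle through all slots.

    Uses Sattolo's algorithm, which yields a permutation with exactly
    one cycle, so a walk touches every slot before repeating.
    """
    rng = random.Random(seed)
    next_index = list(range(n_slots))
    for i in range(n_slots - 1, 0, -1):
        j = rng.randrange(i)  # j < i (never i itself) keeps it a single cycle
        next_index[i], next_index[j] = next_index[j], next_index[i]
    return next_index


def chase(next_index: List[int], steps: int, start: int = 0) -> int:
    """Follow the chain; each step depends on the result of the previous one."""
    idx = start
    for _ in range(steps):
        idx = next_index[idx]
    return idx


cycle = build_chase_cycle(1024)
# After exactly n_slots steps the walk is back where it started.
print(chase(cycle, 1024) == 0)
```

A real latency test would do the same walk in C or assembly over a buffer of the desired depth and divide elapsed time by the number of steps.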

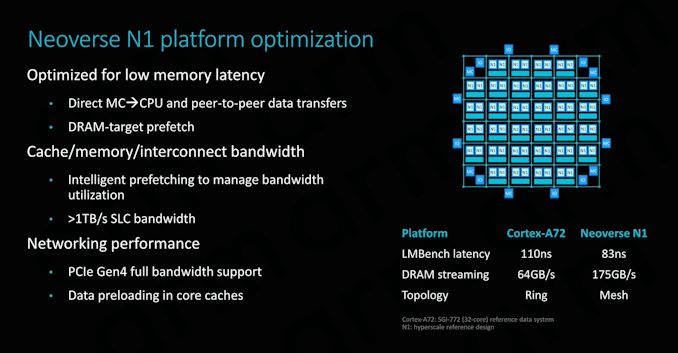

Our measured 81ns structural estimate here almost directly matches Arm’s published 83ns figure from a year ago, lending further credence to Arm’s figures from back then (Arm's figure was LMBench random using hugepages, whereas we're accounting for TLB misses in our patterns with 4KB pages).

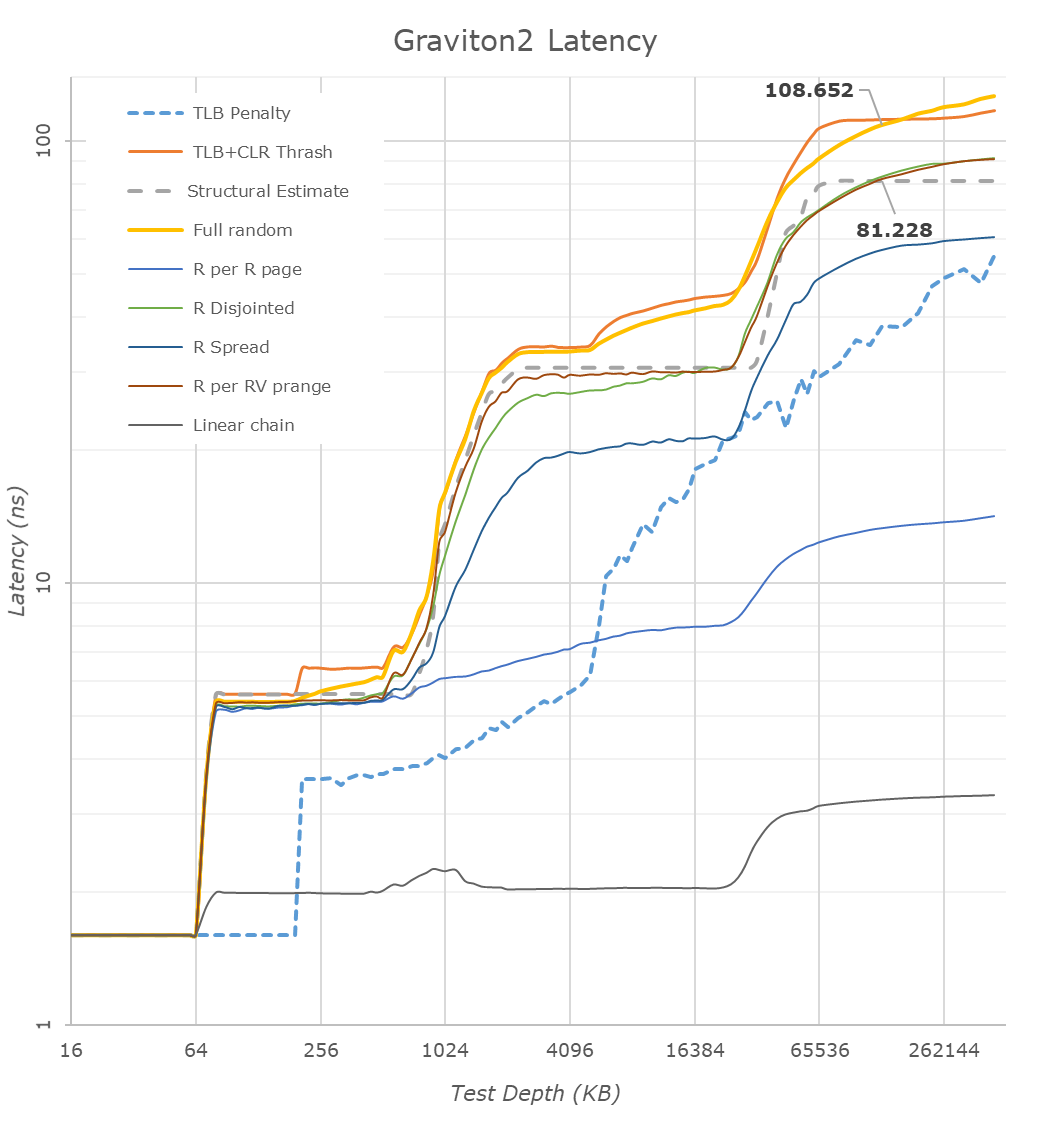

Turning to a logarithmic representation of the same data, we better see the differences in the cache hierarchies.

Compared to the AMD and Intel CPUs, we see the N1 cores’ advantage in the doubled 64KB L1D cache. L1 access latency should be 4 cycles on all of the cores, with the absolute figures in nanoseconds differing only due to the clock frequency differences between the cores.
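As a quick illustration of why identical cycle counts show up as different nanosecond figures, the sketch below converts cycles to time. The 2.5GHz Graviton2 clock is not restated in this section, and the 3.0GHz value is a made-up comparison point, not a measured clock of either x86 part.

```python
def cycles_to_ns(cycles: int, clock_ghz: float) -> float:
    """Convert a latency in core cycles to nanoseconds."""
    return cycles / clock_ghz


GRAVITON2_GHZ = 2.5     # Graviton2 core clock (assumed here, not given in this section)
HYPOTHETICAL_GHZ = 3.0  # hypothetical faster core, for contrast only

l1_graviton2_ns = cycles_to_ns(4, GRAVITON2_GHZ)
l1_faster_ns = cycles_to_ns(4, HYPOTHETICAL_GHZ)

print(f"4 cycles @ {GRAVITON2_GHZ} GHz = {l1_graviton2_ns:.2f} ns")  # 1.60 ns
print(f"4 cycles @ {HYPOTHETICAL_GHZ} GHz = {l1_faster_ns:.2f} ns")
```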

The L2 cache of the Graviton2 falls in at 1MB and the access latency here is also competitive at 11 cycles. Arm gives the option between a 512KB 9 cycle or a 1MB 11 cycle configuration, and Amazon’s designers here chose the latter option. Halfway through the 1MB L2 cache we see the latencies of some access patterns increase, and this is due to the test exceeding the capacity of the L1 TLB which falls in at 48 pages (192KB coverage) for the N1 cores, also resulting in the big jump in the TLB miss penalty curve. AMD and Intel here go up to 64 pages and 256KB coverage. To be noted in these results is AMD’s prefetchers pulling into L2, whereas Arm and Intel cores only pull into L3 for more complex patterns.
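The TLB coverage figures quoted above are simply entries multiplied by page size, as this small sketch shows. It assumes the 4KB pages used in the test; the helper name is illustrative.

```python
PAGE_SIZE_KB = 4  # 4KB pages, as used in the article's test patterns


def tlb_coverage_kb(entries: int, page_kb: int = PAGE_SIZE_KB) -> int:
    """Memory reachable without an L1 TLB miss, in KB."""
    return entries * page_kb


n1_coverage = tlb_coverage_kb(48)   # Neoverse N1: 48 L1 TLB entries
x86_coverage = tlb_coverage_kb(64)  # AMD and Intel: 64 entries

print(n1_coverage, x86_coverage)  # 192 256
```

Any test depth beyond that coverage starts to incur L1 TLB misses on patterns that touch a new page on every access, which is where the latency curves step up inside the L2 region.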

Going beyond the L2, we reach the L3 where we’re able to test Arm’s CMN-600 mesh interconnect for the first time. The cache hierarchy covers 32MB depth; the interesting aspect here is that the latency remains relatively flat and within 2ns when testing some patterns between 3MB and 32MB, meaning there's fine-grained access hashing across the chip's slices.

The average estimated structural latency of the cache falls in at around 29.6ns, which isn’t all too great when compared to Intel’s ~18.9ns L3 cache, even considering that this is split up across 32 slices versus Intel’s 24 slices. Of course, AMD’s L3 leads here at only 10.6ns, but that’s only shared within 4 CPU cores and doesn’t go nearly as deep.

What we’re also seeing here is that the Graviton2’s N1 cores’ prefetchers aren’t set up to be nearly as aggressive in some more complex patterns as what we saw in their mobile Cortex-A76 siblings; it’s likely that this was done on purpose to avoid unnecessary memory traffic on the chip, as with 64 cores you’re going to be very bandwidth starved, and you don’t want to waste any of that on possible mis-prefetching.

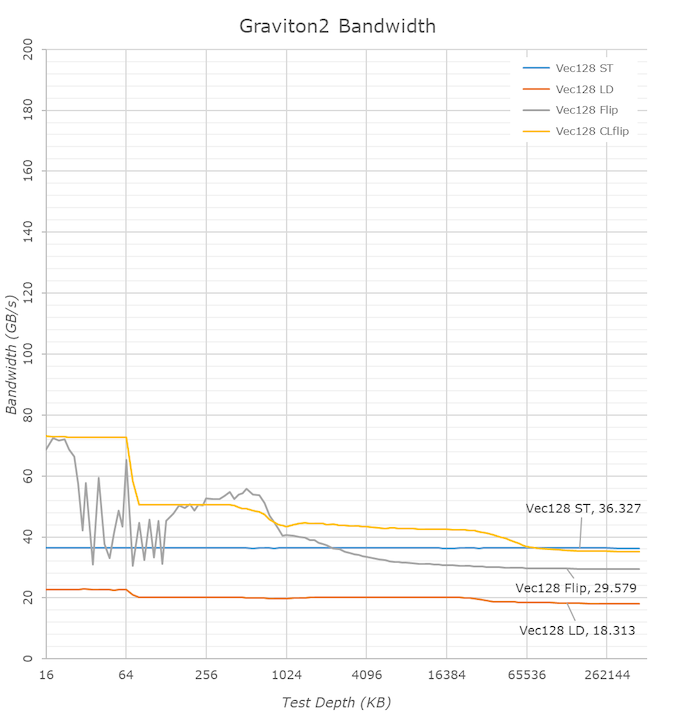

Moving onto bandwidth testing, we’re solely looking at single-core bandwidth here.

Things are looking massively impressive for the Graviton2’s Neoverse N1 cores, as a single CPU core is able to stream writes at up to 36GB/s. What’s interesting here is that the N1 cores, like the Cortex-A76 cores, take advantage of the relaxed memory ordering of the Arm architecture to essentially behave the same as non-temporal writes would on an x86 system, and that’s why the bandwidth is flat across the whole test depth.

Loading from memory achieves up to 18.3GB/s and memory copy (flip test) achieves an impressive 29.57GB/s, which is more than double what’s achieved on the AMD system, and almost triple the Intel system. From a single-core perspective, it seems that the Arm design is able to have significantly better memory capabilities.

We’re still seeing the odd zig-zagging behaviour in the L1 and L2 caches for memory copies that we saw on mobile A76-based chips, possibly due to cache bank access conflicts for this particular test that show up in Arm's new microarchitecture.

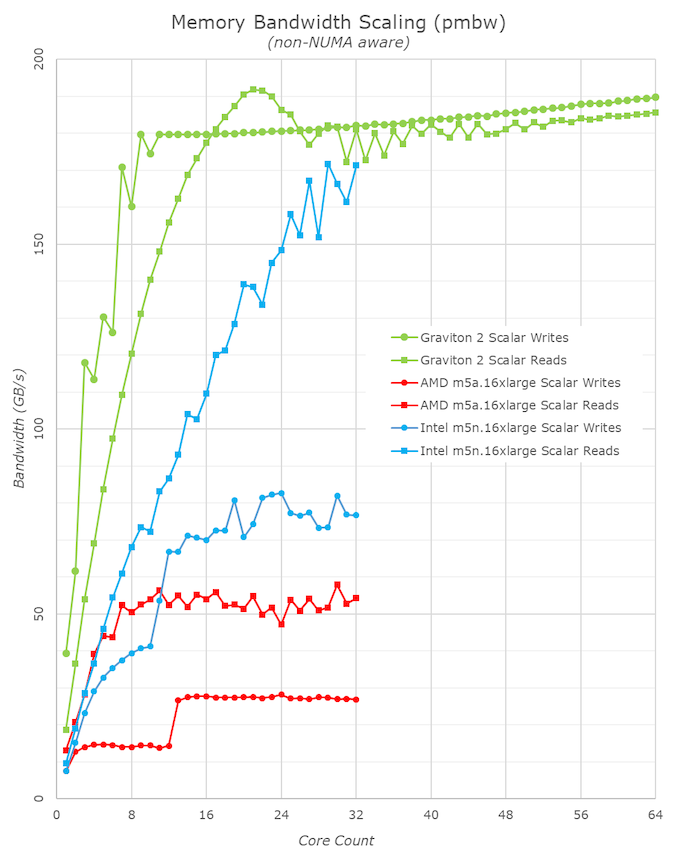

I didn’t have a proper multi-core bandwidth test available in my toolset (I'm going to have to write one), so I fell back to Timo Bingmann’s PMBW test for some quick numbers on the memory bandwidth scaling of the Graviton2.

The AMD and Intel systems here aren’t quite representative as the test isn’t NUMA aware and that adds a bit of complexity to the matter – as mentioned, we’ll need to write a new custom tool that’s a bit more flexible and robust.

The Arm chip is quite impressive: we seemingly only needed 8 CPU cores to saturate the write bandwidth of the system, and only 16 cores for the read bandwidth. The highest figure reached about 190GB/s, near the theoretical 204GB/s peak of the system, and this is only using scalar 64B accesses. Very impressive.
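To put these numbers in context, the sketch below works out the fraction of peak achieved, the fair share of bandwidth per core with all 64 cores active, and a lower bound on the cores needed to saturate DRAM. The lower bound assumes perfectly linear scaling of the single-core figures from the earlier single-core test, which came from a different tool than PMBW, so it is a rough idealization rather than a prediction.

```python
import math

PEAK_GBS = 204.0              # theoretical peak quoted in the article
MEASURED_GBS = 190.0          # best multi-core figure observed
SINGLE_CORE_LOAD_GBS = 18.3   # single-core load bandwidth
SINGLE_CORE_WRITE_GBS = 36.0  # single-core streaming write bandwidth
CORES = 64

utilization = MEASURED_GBS / PEAK_GBS
per_core_share = PEAK_GBS / CORES

# Idealized lower bounds, assuming single-core bandwidth scales linearly.
min_read_cores = math.ceil(PEAK_GBS / SINGLE_CORE_LOAD_GBS)
min_write_cores = math.ceil(PEAK_GBS / SINGLE_CORE_WRITE_GBS)

print(f"utilization: {utilization:.1%}")
print(f"per-core share at {CORES} cores: {per_core_share:.2f} GB/s")
print(f"ideal cores to saturate: read {min_read_cores}, write {min_write_cores}")
```

Under these assumptions the ideal bounds come out at 12 cores for reads and 6 for writes, which is in the same ballpark as the observed 16 and 8 once sub-linear scaling is allowed for. The per-core share of roughly 3.2GB/s with all cores busy is far below what a single core can pull on its own.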

96 Comments


eastcoast_pete - Tuesday, March 10, 2020 - link

While I am currently not in the market for such cloud computing services aside from maybe some video processing, I for one welcome the arrival of a competitive non-x86 solution! Can only make life better and cheaper when and if I do. Also, ARM N1 arch lighting a fire under the x86 makers in their easy chairs will keep AMD and Intel on their feet, and that advance will filter down to my future desktops and laptops.

eastcoast_pete - Tuesday, March 10, 2020 - link

Thanks Andrei! Just out of curiosity, that "noisy neighbor" behavior you saw on the Xeon? I know it's mostly speculation, but would you expect this if someone is running AVX512 on neighboring cores? AVX512 is very powerful if applications can make use of it, but things get very toasty fast. Care to speculate?

willgart - Tuesday, March 10, 2020 - link

where are the real life benchmarks???

video encoding / decoding ?

database performance ?

web performance ?

https encryption ?

etc...

The_Assimilator - Thursday, March 12, 2020 - link

Agreed 100%. Without figures of actual real-world applications compiled with actual real-world compilers handling actual real-world workloads, this essentially amounts to an advertorial for Amazon, Graviton2 and Arm.

Danvelopment - Wednesday, March 11, 2020 - link

This may sound stupid as I'm just getting into AWS as backup throughput for local servers on my web project that releases April.

"If you’re an EC2 customer today, and unless you’re tied to x86 for whatever reason, you’d be stupid not to switch over to Graviton2 instances once they become available, as the cost savings will be significant."

How do you know whether what you're using is Intel, AMD or Graviton(1/2)? (I'm using T2s right now with no weighting and if our release gets hit hard, will give it weight and increase its capacity).

As they're not actually doing anything, then I'd have no issue switching over, but can't tell what I'm on.

CampGareth - Wednesday, March 11, 2020 - link

There's a list here: https://aws.amazon.com/ec2/instance-types/

If you're on T2 instances you're on Intel chips at the moment.

Quantumz0d - Wednesday, March 11, 2020 - link

No real benchmark. Another SPEC Whiteknighting. I see the AT forums Apple CPU thread being getting creamed over this again.

ARM is a lockdown POS. You can't even buy them in this case. Altera CPU didn't even came to STH for comparision where it had so many cores against x86 parts. You cannot get them running majority of the consumer workload. One can claim Power from IBM has SMT8 and first Gen4 and all but if its not consumer centric it won't generate much of profit.

Author seems to love ARM for some reason and hate x86. Its been since Apple articles but in real time we saw how iPhone gets decimated in speed comparison against Android Flagships running the stone age Qualcomm. We have seen this ARM dethroning x86 numerous times and failed. I hope this also fails, a non standard CPU leaves all fun out of equation. And needs emulation for consumer use which slows down performance.

People want to see all the workloads. Not SPEC. Also where is EPYC Rome comparision Nowhere. Soon Milan is going to hit. Glad that AMD is alive. This stupid ARM BGA dumpster should be dead in its infancy.

Wilco1 - Wednesday, March 11, 2020 - link

LOL - someone feels extremely threatened by Arm servers...

Mission accomplished!

anonomouse - Wednesday, March 11, 2020 - link

Well that was bizarrely incoherent. What workloads would you want to see instead? Nothing else you wrote made any sense or had any facts behind it.

Andrei Frumusanu - Wednesday, March 11, 2020 - link

He's been doing it for the last year or two, ignore it.