Enterprise SATA SSDs: Can Budget 2020 beat Top Line 2017?

by Billy Tallis on February 4, 2020 11:00 AM EST

Peak Throughput

For client/consumer SSDs we primarily focus on low queue depth performance for its relevance to interactive workloads. Server workloads are often intense enough to keep a pile of drives busy, so the maximum attainable throughput of enterprise SSDs is actually important. But it usually isn't a good idea to focus solely on throughput while ignoring latency, because somewhere down the line there's always an end user waiting for the server to respond.

In order to characterize the maximum throughput an SSD can reach, we need to test at a range of queue depths. Different drives will reach their full speed at different queue depths, and increasing the queue depth beyond that saturation point may be slightly detrimental to throughput, and will drastically and unnecessarily increase latency. Because of that, we are not going to compare drives at a single fixed queue depth. Instead, each drive was tested at a range of queue depths up to the excessively high QD 512. (SATA drives are limited to QD32, but we're also using this test suite for NVMe drives.) For each drive, the queue depth with the highest performance was identified. Rather than report that value, we're reporting the throughput, latency, and power efficiency for the lowest queue depth that provides at least 95% of the highest obtainable performance. This often yields much more reasonable latency numbers, and is representative of how a reasonable operating system's IO scheduler should behave. (Our tests have to be run with any such scheduler disabled, or we would not get the queue depths we ask for.)
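The selection rule described above can be sketched in a few lines of Python. This is only an illustration of the "lowest queue depth that reaches 95% of peak" idea; the function name and the sample numbers are made up for the example and are not measurements from this review.

```python
def pick_reported_queue_depth(results, threshold=0.95):
    """Return the lowest queue depth whose throughput is at least
    `threshold` times the best throughput seen at any queue depth.

    `results` maps queue depth -> throughput (any consistent unit).
    """
    if not results:
        raise ValueError("results must not be empty")
    peak = max(results.values())
    # Walk queue depths from lowest to highest and stop at the first
    # one that is "close enough" to the peak.
    for qd in sorted(results):
        if results[qd] >= threshold * peak:
            return qd


# Hypothetical throughput curve (arbitrary units), NOT real drive data.
example = {1: 10.0, 2: 19.0, 4: 36.0, 8: 62.0,
           16: 88.0, 32: 97.0, 64: 100.0, 128: 99.0}

print(pick_reported_queue_depth(example))  # 32
```

In this made-up curve the absolute peak is at QD64, but QD32 already delivers 97% of it, so QD32 is the operating point that would be reported.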

Unlike last year's enterprise SSD reviews, we're now using the new io_uring asynchronous IO APIs on Linux instead of the simpler synchronous APIs that limit software to one outstanding IO per thread. This means we can hit high queue depths without loading down the system with more threads than we have physical CPU cores, and that leads to much better latency metrics, but the impact on SATA drives is minimal because they are limited to QD32. Our new test suite will use up to 16 threads to issue IO.
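To make the thread-count argument concrete, here is a small sketch comparing how many threads each approach needs to reach a given total queue depth. The even split of outstanding IOs across threads is an assumption made for illustration; the review only states that up to 16 threads are used, not how the queue depth is divided among them.

```python
import math


def threads_for_sync_io(queue_depth):
    # Synchronous APIs allow one outstanding IO per thread,
    # so the thread count must equal the queue depth.
    return queue_depth


def async_plan(queue_depth, max_threads=16):
    # Hypothetical even split: cap the thread count and let each
    # thread keep several IOs in flight.
    threads = min(queue_depth, max_threads)
    per_thread_depth = math.ceil(queue_depth / threads)
    return threads, per_thread_depth


for qd in (1, 32, 512):
    threads, depth = async_plan(qd)
    print(f"QD{qd}: sync needs {threads_for_sync_io(qd)} threads, "
          f"async can use {threads} threads x {depth} IOs each")
```

At QD512 the synchronous approach would need 512 threads, far more than the CPU has cores, while the asynchronous approach stays at 16.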

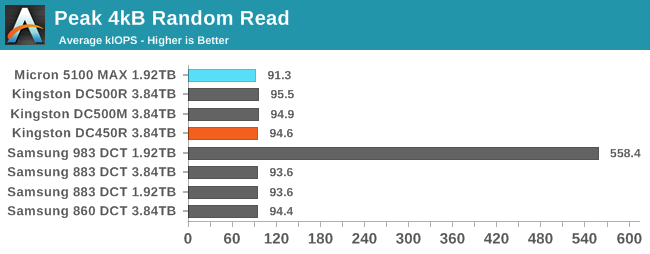

Peak Random Read Performance

These SATA drives all have no trouble saturating the SATA link with 4kB random reads at high queue depths. The Micron 5100 MAX is technically the slowest of the bunch, but it's less than a 5% difference. That pales in comparison to the factor of 6 throughput improvement made possible by moving up to an NVMe drive. (Though to be fair, the NVMe drive peaks at a much higher queue depth of at least 80. At QD32, it's merely 3x faster than the SATA SSDs.)

[Chart tabs: Power Efficiency in kIOPS/W, Average Power in W]

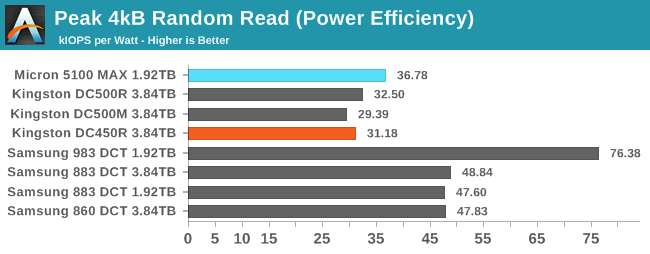

Peak random read performance may be very similar between these SATA drives, but there's still considerable variation in their power consumption and thus efficiency. The Samsung drives are the most efficient as usual, hovering just below 2W for this test compared to 2.5W for the Micron 5100 MAX and 3W for the Kingston drives.
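The efficiency metric here is simply throughput divided by average power. As a rough sketch, the following uses the approximate power figures quoted above together with a single placeholder throughput value (an assumption, since the drives all perform about the same in this test) to show how the power differences alone translate into efficiency differences.

```python
def kiops_per_watt(kiops, watts):
    """Power efficiency as thousands of IOPS per watt."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return kiops / watts


# Placeholder throughput shared by all drives; NOT a measured value.
ASSUMED_KIOPS = 90.0

# Approximate average power from the text above.
approx_power_w = {
    "Samsung SATA": 2.0,      # "just below 2W"
    "Micron 5100 MAX": 2.5,
    "Kingston": 3.0,
}

efficiency = {name: kiops_per_watt(ASSUMED_KIOPS, watts)
              for name, watts in approx_power_w.items()}

for name, value in efficiency.items():
    print(f"{name}: {value:.1f} kIOPS/W")
```

With equal throughput, a drive drawing 2W ends up 1.5 times as efficient as one drawing 3W, regardless of the throughput value chosen.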

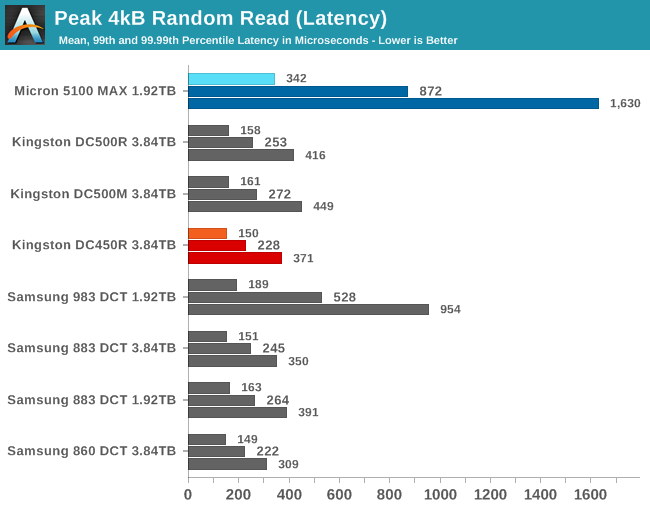

The Micron 5100 MAX has the worst latency scores of the bunch, the same outcome as for the QD1 random read test. Its tail latencies are much higher than any of the other SATA drives. The Samsung 983 DCT has the second-worst latency scores, but that's because it's operating at a much higher queue depth in order to hit 6x throughput. The Kingston DC450R actually manages slightly better latency scores than the other two Kingston drives, and that puts it on par with the Samsung SATA drives.
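The link between queue depth and latency follows from Little's law: in steady state, mean latency is roughly the number of IOs in flight divided by the IOPS achieved. The sketch below uses made-up numbers (not results from this review) to show why pushing queue depth past the saturation point raises latency without adding throughput.

```python
def mean_latency_us(queue_depth, iops):
    """Little's law: mean latency = IOs in flight / completion rate.

    Returns the mean latency in microseconds.
    """
    if iops <= 0:
        raise ValueError("iops must be positive")
    return queue_depth / iops * 1_000_000


# Hypothetical drive that is already saturated at 100k IOPS.
SATURATED_IOPS = 100_000

for qd in (32, 64, 128):
    latency = mean_latency_us(qd, SATURATED_IOPS)
    print(f"QD{qd}: about {latency:.0f} us mean latency")
```

Once throughput stops scaling, every doubling of queue depth doubles the mean latency, which is why a drive tested at a much higher queue depth can post worse latency scores even when it is far faster.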

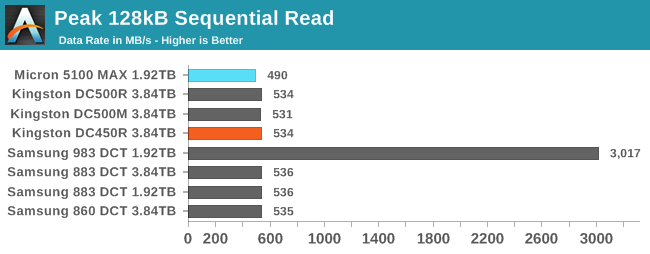

Peak Sequential Read Performance

Rather than simply increase the queue depth of a single benchmark thread, our sequential read and write tests first scale up the number of threads performing IO, up to 16 threads each working on different areas of the drive. This more accurately simulates serving up different files to multiple users, but it reduces the effectiveness of any prefetching the drive is doing.

[Chart tabs: Power Efficiency in MB/s/W, Average Power in W]

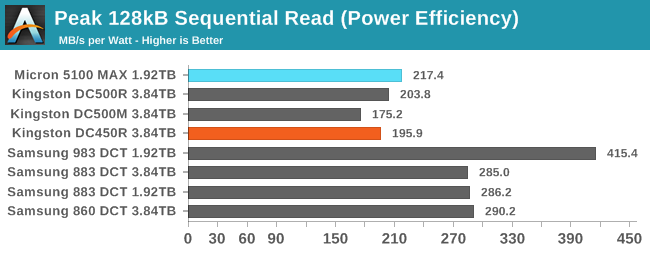

Moving up to higher queue depths doesn't really change the situation we saw with sequential reads at QD1. The SATA drives are all still performing about the same, with the Micron 5100 MAX a few percent slower than the more recent drives. That doesn't stop the 5100 MAX from being a bit more power efficient than the Kingston drives, but neither brand matches Samsung.

Steady-State Random Write Performance

Enterprise SSD write performance is conventionally reported as steady-state performance rather than peak performance. Sustained writing to a flash-based SSD usually causes performance to drop as the drive's spare area fills up and the SSD needs to spend some time on background work to clean up stale data and free up space for new writes. Conventional wisdom holds that writing several times the drive's capacity should be enough to get a drive to steady-state, because nobody actually ships SSDs with greater than 100% overprovisioning ratios. But in practice, things are a bit more complicated.

For starters, steady-state performance isn't necessarily worst-case performance. We've noticed several SSDs that show much worse random write performance if they were initially filled with sequential writes rather than random writes. Those drives actually speed up as they are preconditioned with random writes on their way to reaching steady state. Secondly, drives can be pretty good at recovering performance when they get any kind of respite from full-speed write pressure. So even though our enterprise test suite doesn't give SSDs any explicit idle time the way our consumer test suite does, running a read performance test or low-QD writes can give a drive the breathing room it needs to get caught up on garbage collection. We don't have the time to do several full drive writes for each queue depth tested (especially for slower SATA drives), so some of our write performance results end up surprisingly high compared to the drive's specifications. Real-world write performance depends not just on the current workload, but also on the recent history of how a drive has been used, and no single performance test can capture all the relevant effects.

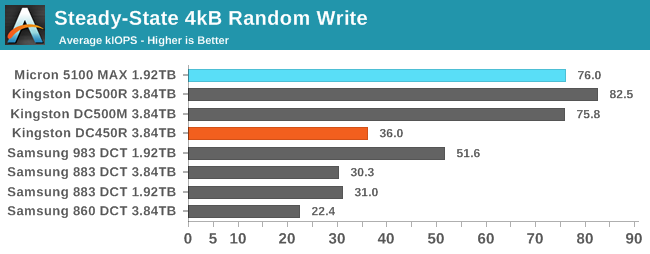

Thanks to its massive overprovisioning, it's no surprise to see the Micron 5100 MAX delivering great random write performance here, matching the Kingston DC500M. The Kingston DC500R performing even better is more of a surprise, and seems to be an artifact of our test procedure: under sustained load its random write performance definitely does drop to the rated level, which is no better than the Samsung drives, but the structure of our automated tests didn't keep it at steady-state 100% of the time. Even the DC450R was caught only on the cusp of its steady-state, or else it would have scored only slightly better than the 860 DCT.

[Chart tabs: Power Efficiency in kIOPS/W, Average Power in W]

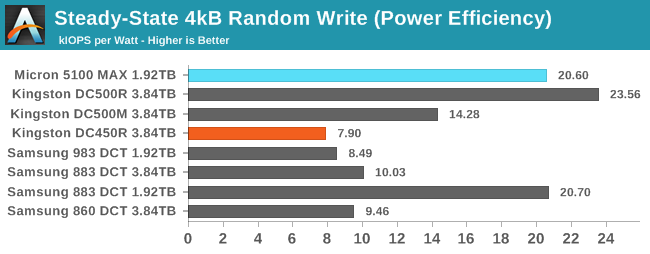

The drives with the highest random write performance also dominate the power efficiency chart for the most part. The Micron 5100 MAX scores very well here, as well it should. The Kingston DC500R's efficiency score is inflated by the fact that it hadn't fully reached steady-state; when it finally does, it and the DC450R end up drawing 5.5-6W and having half the performance per Watt of the next-worst drive in this batch. The 1.92 TB Samsung 883 DCT has surprisingly low power consumption here, indicating that it may have also not quite been in its lowest steady-state even though the performance was barely above its specifications.

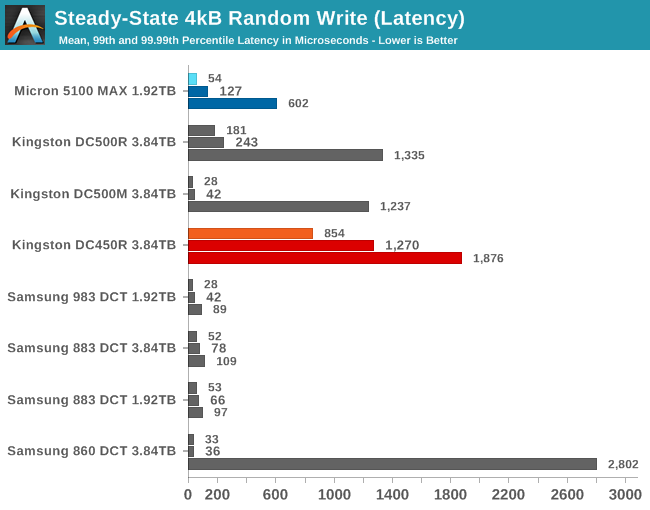

The Kingston SATA drives all have poor tail latency scores, and the DC450R's mean random write latency is pretty poor as well. It's possible these drives would show better QoS once they've fully reached steady state, but even if that's the case, it appears we've caught them in the midst of a rough transition toward steady-state. Despite being a very high-end drive for its time and being able to sustain excellent random write throughput, the Micron 5100 MAX also has poor QoS compared to the modern competition from Samsung.

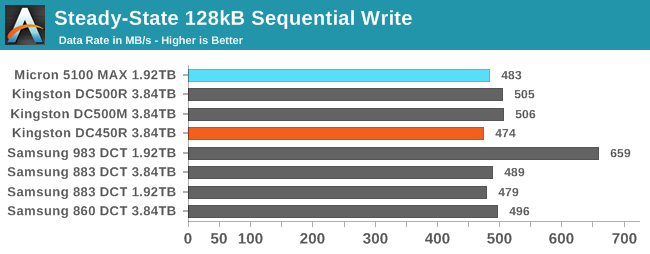

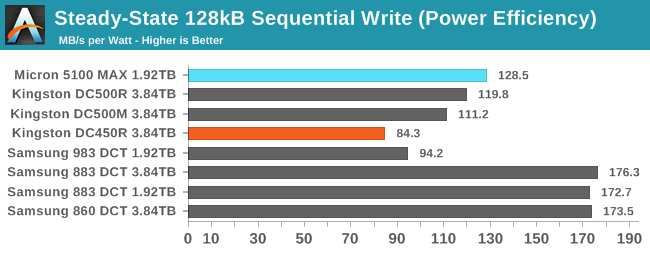

Peak Sequential Write Performance

As with our sequential read test, we test sequential writes with multiple threads each performing sequential writes to different areas of the drive. This is more challenging for the drive to handle, but better represents server workloads with multiple active processes and users.

The bottleneck presented by the SATA bus is clearly preventing these drives from standing out in terms of sequential write performance. However, the 983 DCT shows that drives with 2-4TB of flash aren't necessarily that much faster once the SATA bottleneck is out of the way.

[Chart tabs: Power Efficiency in MB/s/W, Average Power in W]

As usual, the Samsung SATA drives turn in the best power efficiency scores. The Micron 5100 MAX has only three-fourths the performance per Watt, and the Kingston drives are worse than that. The DC450R is in last place for efficiency, since it draws twice as much power as a Samsung 883 DCT but is slightly slower.

20 Comments


romrunning - Tuesday, February 4, 2020 - link

I understand the reason (batch of excess drives leftover from OEM) for using the Micron 5100 MAX, but for "enterprise" SSD use, I really would have liked to see Intel's DC-series (DC = Data Center) of enterprise SSDs compared.

DanNeely - Tuesday, February 4, 2020 - link

This is a new benchmark suite, which means all the drives Billy has will need to be re-run through it to get fresh numbers, which is why the comparison selection is so limited. More drives will come in as they're retested; but looking at the bench SSD 2018 data it looks like the only enterprise SSDs Intel has sampled are Optanes.

Billy Tallis - Tuesday, February 4, 2020 - link

This review was specifically to get the SATA drives out of the way. I also have 9 new enterprise NVMe drives to be included in one or two upcoming reviews, and that's where I'll include the fresh results for drives I've already reviewed like the Intel P4510, Optane P4800X and Memblaze PBlaze5.

I started benchmarking drives with the new test suite at the end of November, and kept the testbed busy around the clock until the day before I left for CES.

romrunning - Wednesday, February 5, 2020 - link

I appreciate getting more enterprise storage reviews! Not enough of them, especially for those times when you might have some leeway on which storage to choose.

pandemonium - Wednesday, February 5, 2020 - link

Agreed. I'm curious how my old pseudo enterprise Intel 750 would compare here.

Scipio Africanus - Tuesday, February 4, 2020 - link

I love those old stock enterprise drives. Before I went NVMe just a few months ago, my primary drive was a Samsung SM863 960GB, which was a pumped up high endurance 850 Pro.

shodanshok - Wednesday, February 5, 2020 - link

Hi Billy, thanks for the review. I always appreciate articles like this.

That said, this review really fails to take into account the true difference between the listed enterprise disks, and this is due to a general misunderstanding of what powerloss protection does and why it is important.

In the introduction, you state that powerloss protection is a data integrity feature. While nominally true, the real added value of powerloss protection is much higher performance for synchronized write workloads (ie: SQL databases, filesystem metadata updates, virtual machines, etc). Even disks *without* powerloss protection can give perfect data integrity: this is achieved with flush/fsync (on the application/OS side) and SATA write barriers/FUAs. Applications which do not use fsync will be unreliable even on drives featuring powerloss protection, as any write will be cached in the OS cache for a relatively long time (~1s on Windows, ~30s on Linux), unless the file is opened in direct/unbuffered mode.

Problem is, synchronous writes are *really* expensive and slow, even for flash-backed drives. I have the OEM version of a fast M.2 Samsung 960 EVO NVMe drive and in 4k sync writes it shows only ~300 IOPS. For unsynced, direct writes (ie: bypassing the OS cache but using the drive's internal DRAM buffer), it has 1000x the IOPS. To be fast, flash really needs write coalescing. Avoiding that (via SATA flushes) really wreaks havoc on the drive's performance.

Obviously, not all writes need to be synchronous: most use cases can tolerate a very small data loss window. However, for applications where data integrity and durability are paramount (such as SQL databases), sync writes are absolutely necessary. In these cases, powerloss protected SSDs will have performance one (or more) order of magnitude higher than consumer disks: having a non-volatile DRAM cache (thanks to power capacitors), they will simply *ignore* SATA flushes. This enables write aggregation/coalescing and the resulting very high performance advantage.

In short: if faced with a SQL or VM workload, the Kingston DC450R will fare very poorly, while the Micron 5100 MAX (or even the DC500R) will be much faster. This is fine: the DC450R is a read-intensive drive, not intended for SQL workloads. However, this review (which puts it against powerloss protected drives) fails to account for that key difference.

mgiammarco - Thursday, February 6, 2020 - link

I agree perfectly; finally someone explains the real importance of power loss protection. Frankly speaking, should an "enterprise SSD" without PLP and with low write endurance really be called "enterprise"? What is the difference from a standard SSD?

AntonErtl - Wednesday, February 5, 2020 - link

There is a difference between data integrity and persistency, but power-loss protection is needed for either.

Data integrity is when your data is not corrupted if the system fails, e.g., from a poweroff; this needs the software (in particular the database system and/or file system) to request the writes in the right order, but it also needs the hardware to not reorder the writes, at least as far as the persistent state is concerned. Drives without power-loss protection tend not to give guarantees in this area; some guarantee that they will not damage data written long ago (which other drives may do when they consolidate the still-living data in an erase block), but that's not enough for data integrity.

Persistency is when the data is at least as up-to-date as when your last request for persistency (e.g., fsync) was completed. That's often needed in server applications; e.g., when a customer books something, the data should be in persistent storage before the server sends out the booking data to the customer.

shodanshok - Wednesday, February 5, 2020 - link

fsync(), write barriers and SATA flushes/FUAs put strong guarantees on data integrity and durability even *without* a powerloss protected drive cache. So, even a "serious" database running on any reliable disk (ie: one not lying about flushes) will be 100% functional/safe; however, performance will tank.

A drive with a powerloss protected cache will give much higher performance but, if the application is correctly using fsync() and the OS supports write barriers, no added integrity/durability capability.

Regarding write reordering: write barriers explicitly prevent that.
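For readers who want to see the cost of synchronous writes discussed in this thread on their own hardware, here is a minimal sketch. It compares ordinary buffered 4kB writes with writes that are each followed by `os.fsync()`. Note that this is not the same comparison as the direct/unbuffered writes mentioned above, the helper name `write_iops` is made up for this example, and the absolute numbers depend heavily on the drive, filesystem and OS.

```python
import os
import tempfile
import time


def write_iops(path, count=200, block=4096, sync=False):
    """Write `count` blocks of `block` bytes and return writes per second.

    With sync=True every write is followed by fsync(), which asks the
    OS (and in turn the drive) to make the data durable before returning.
    """
    buf = os.urandom(block)
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        start = time.perf_counter()
        for _ in range(count):
            os.write(fd, buf)
            if sync:
                os.fsync(fd)
        # Guard against a zero interval on very coarse timers.
        elapsed = max(time.perf_counter() - start, 1e-9)
    finally:
        os.close(fd)
    return count / elapsed


with tempfile.TemporaryDirectory() as tmp:
    target = os.path.join(tmp, "fsync_test.bin")
    buffered = write_iops(target, sync=False)
    synced = write_iops(target, sync=True)
    print(f"buffered writes: {buffered:,.0f} per second")
    print(f"fsync'd writes:  {synced:,.0f} per second")
```

The temporary directory usually lives on the system drive (and may be a RAM-backed filesystem on some systems), so point `target` at the drive you actually want to test.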