Zen and Vega DDR4 Memory Scaling on AMD's APUs

by Gavin Bonshor on June 28, 2018 9:00 AM EST - Posted in

- CPUs

- Memory

- G.Skill

- AMD

- DDR4

- DRAM

- APU

- Ryzen

- Raven Ridge

- Scaling

- Ryzen 3 2200G

- Ryzen 5 2400G

Integrated Gaming Performance

As stated on the first page, here we take both APUs from DDR4-2133 to DDR4-3466 and run our testing suite at each stage. For our gaming tests, we are only concerned with real-world resolutions and settings for these games. It would be fairly easy to adjust the settings in each game to create a CPU-limited scenario; however, in our view the results from such a test are mostly pointless and do not transfer to the real world. Scaling takes many forms depending on GPU, resolution, detail levels, and settings, so we want to make sure the results correlate with what users will see day-to-day.
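For context on what stepping through the memory straps changes, the theoretical peak bandwidth of a dual-channel DDR4 setup scales linearly with the data rate. The sketch below is an illustration rather than part of our test suite. It assumes two 64-bit channels (16 bytes per transfer) and ignores real-world efficiency losses, and `peak_bandwidth_gbs` is a hypothetical helper name.

```python
# Sketch: theoretical peak bandwidth of dual-channel DDR4.
# Assumptions: 64-bit (8-byte) channels, two channels, no efficiency losses.

BYTES_PER_CHANNEL = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer


def peak_bandwidth_gbs(mt_per_s: float, channels: int = 2) -> float:
    """Peak bandwidth in GB/s (10^9 bytes/s) for a DDR4 data rate given in MT/s."""
    return mt_per_s * 1e6 * BYTES_PER_CHANNEL * channels / 1e9


if __name__ == "__main__":
    # Memory straps named in this article, from DDR4-2133 up to DDR4-3466.
    for strap in (2133, 2666, 2866, 3466):
        print(f"DDR4-{strap}: {peak_bandwidth_gbs(strap):.1f} GB/s")
```

Run as written, this prints roughly 34.1 GB/s for DDR4-2133 and 55.5 GB/s for DDR4-3466, about 62.5% more peak bandwidth, which on these APUs is shared between the CPU cores and the integrated Vega GPU.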

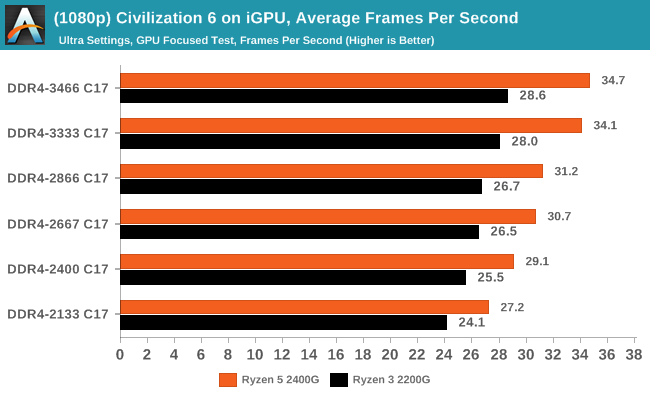

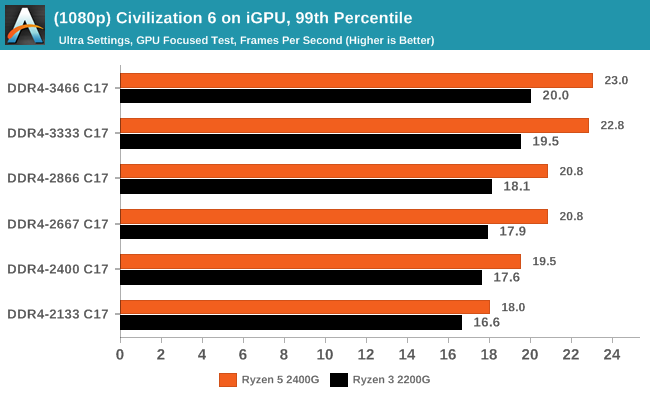

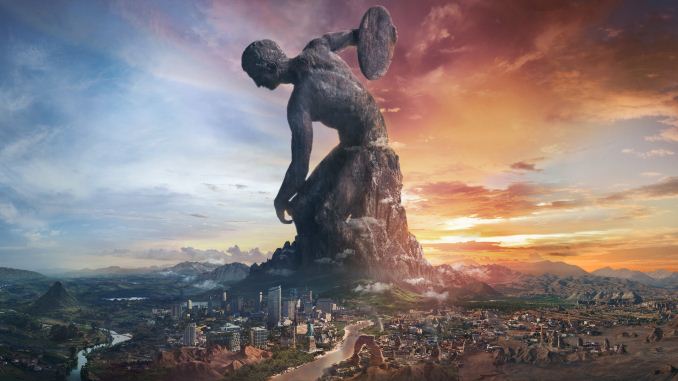

Civilization 6

First up in our APU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up but hard to master.

Civilization 6 certainly appreciates faster memory on integrated graphics, showing a +28% gain in average frame rates for the 2400G, or a +13% gain when compared against the APU's rated memory frequency (DDR4-2666).
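As a rough back-of-the-envelope check (our illustration, not a measured result), these gains can be set against the increase in theoretical bandwidth. This assumes both quoted percentages are measured up to the fastest strap tested, DDR4-3466, and that peak bandwidth scales linearly with the data rate. `pct_gain`, `FPS_GAIN` and `TOP_STRAP` are hypothetical names.

```python
# Sketch: compare the quoted Civilization 6 gains (Ryzen 5 2400G, average fps)
# with the rise in theoretical memory bandwidth.
# Assumption: both gains are measured up to DDR4-3466, the fastest strap tested.

TOP_STRAP = 3466  # MT/s

# Baseline strap (MT/s) -> average frame-rate gain in percent, as quoted above.
FPS_GAIN = {2133: 28.0, 2666: 13.0}


def pct_gain(new: float, old: float) -> float:
    """Percentage increase going from old to new."""
    return (new / old - 1.0) * 100.0


if __name__ == "__main__":
    for base, fps_gain in FPS_GAIN.items():
        # Peak bandwidth is proportional to the data rate, so the ratio of
        # straps equals the ratio of bandwidths.
        bw_gain = pct_gain(TOP_STRAP, base)
        print(
            f"DDR4-{base} -> DDR4-{TOP_STRAP}: bandwidth +{bw_gain:.1f}%, "
            f"fps +{fps_gain:.0f}%, fps/bandwidth ratio {fps_gain / bw_gain:.2f}"
        )
```

Under those assumptions, bandwidth rises by about 62.5% and 30.0% respectively, so each 1% of extra bandwidth returned roughly 0.43 to 0.45% in average frame rate. That is a clear benefit, but well short of linear scaling.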

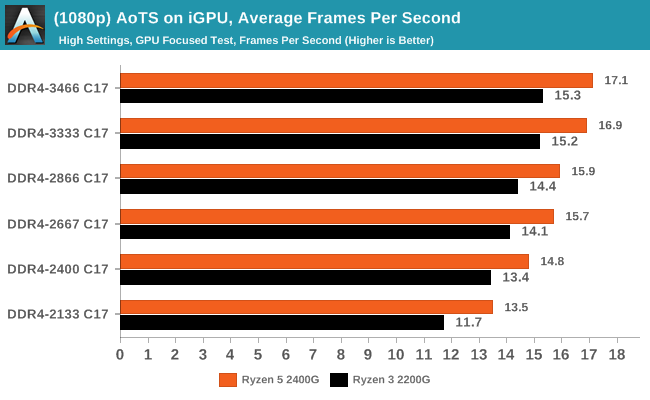

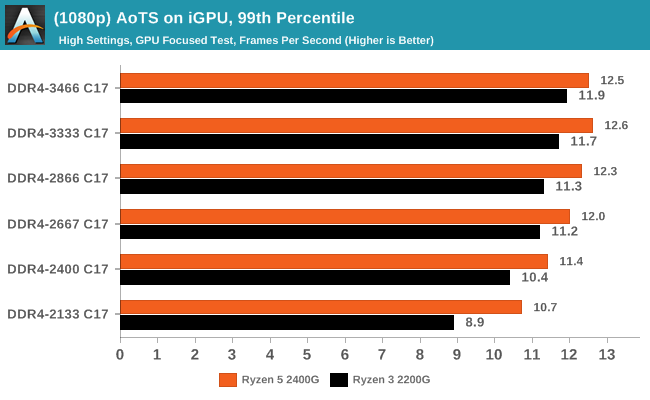

Ashes of the Singularity (DX12)

Seen as the holy child of DX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many DX12 features as it possibly could. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

In Ashes, both APUs saw a 26-30% gain in frame rates moving from the slowest to the fastest memory, a gain that is also seen in the percentile numbers.

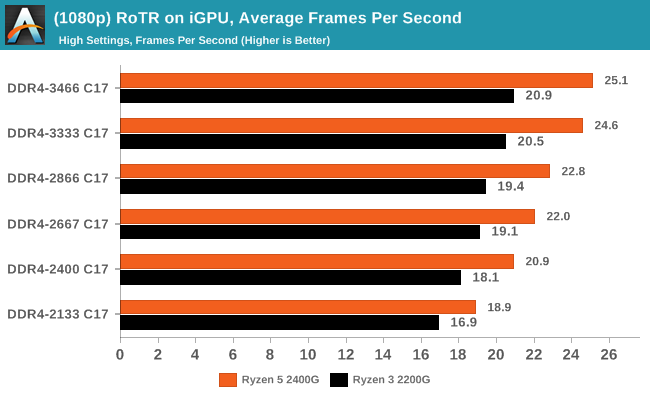

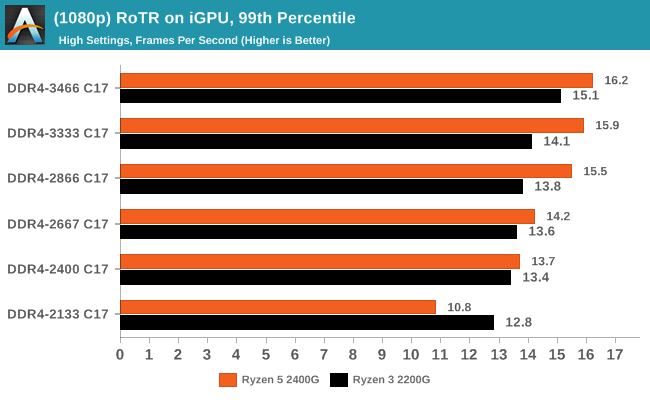

Rise Of The Tomb Raider (DX12)

One of the newest games in the gaming benchmark suite is Rise of the Tomb Raider (RoTR), developed by Crystal Dynamics and the sequel to the popular Tomb Raider, which was loved for its automated benchmark mode. But don’t let that fool you: the benchmark mode in RoTR is very different this time around. Visually, the previous Tomb Raider pushed realism to the limits with features such as TressFX, and the new RoTR goes one step further when it comes to graphics fidelity. This leads to an interesting set of hardware requirements: some sections of the game are typically GPU limited, whereas others with a lot of long-range physics can be CPU limited, depending on how the driver translates the DirectX 12 workload.

Both APUs saw big gains in RoTR; however, it is interesting to note that the 2400G extended its lead over the 2200G: at DDR4-2133 the difference between the two APUs was 12%, but with the fast memory that difference grew to 20%.

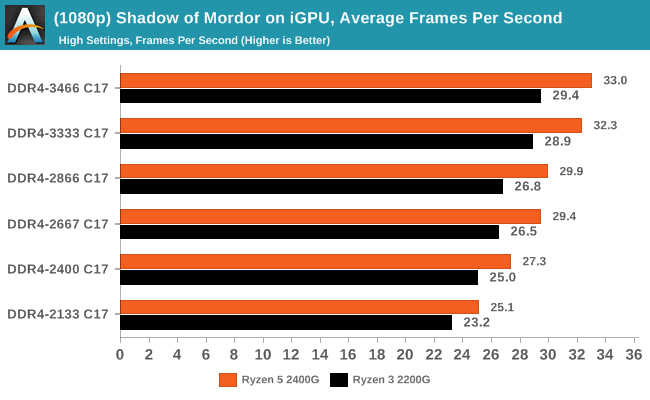

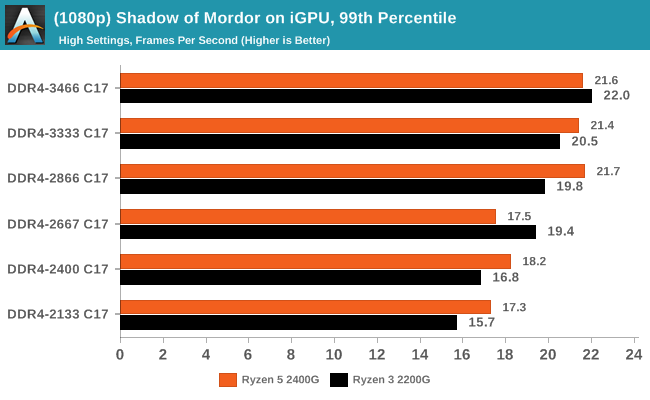

Shadow of Mordor

The next title in our testing is a battle of system performance with the open-world action-adventure title Middle-earth: Shadow of Mordor (SoM for short). Produced by Monolith and using the LithTech Jupiter EX engine and numerous detail add-ons, SoM goes for detail and complexity. The main story itself was written by the same writer as Red Dead Redemption, and the game received Zero Punctuation’s Game of the Year in 2014.

Shadow of Mordor also saw average frame rates rise by 26-32%, while the percentiles are a different story. The Ryzen 5 2400G seemed to top out at DDR4-2866, while the Ryzen 3 2200G kept scaling and eventually beat the other APU. This is despite the fact that the 2200G has less graphical horsepower than the 2400G.

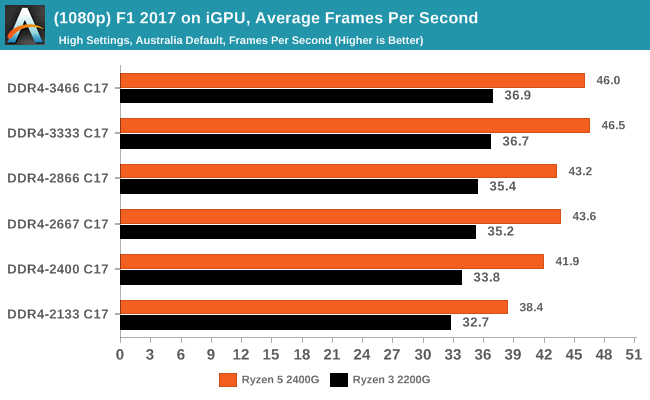

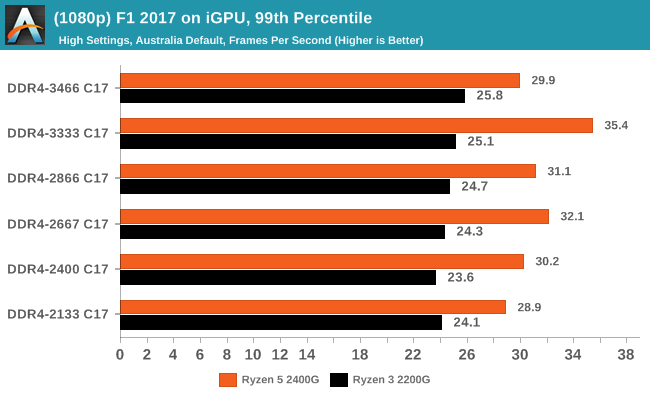

F1 2017

Released in the year its title suggests, F1 2017 is the ninth entry in the franchise to be developed and published by Codemasters. The game is based on the 2017 F1 season and is licensed by the sport's official governing body, the Fédération Internationale de l’Automobile (FIA). F1 2017 features all twenty racing circuits and all twenty drivers across ten teams, and allows F1 fans to immerse themselves in the world of Formula One with a rather comprehensive world championship season mode.

Codemasters' game engines usually respond well to higher memory frequencies, and although the effect was generally positive in F1 2017, it did not improve performance as much as expected. While average frame rates rose gradually through the straps, the Ryzen 5 2400G's 99th percentile results were all over the place and not consistent at all.

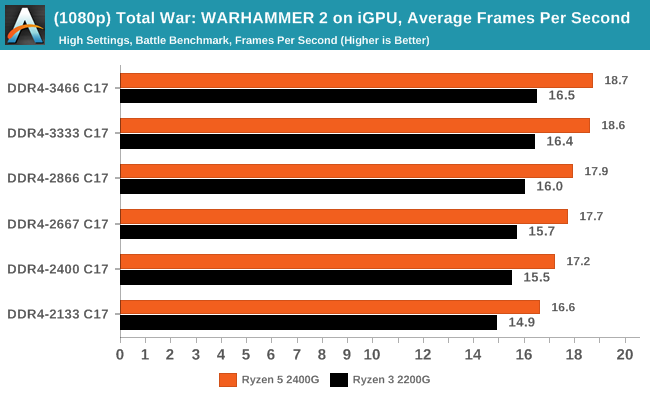

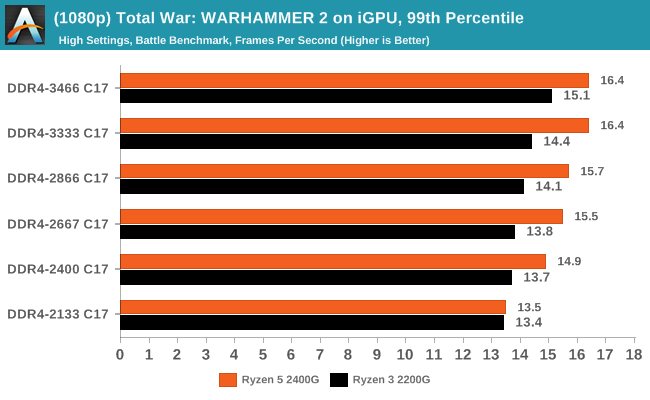

Total War: WARHAMMER 2

The Total War franchise is one of the most popular real-time tactical strategy series of all time, and Sega has taken it into multiple worlds such as the Roman Empire, the Napoleonic era and even Attila the Hun; more recently, it dove into the world of Games Workshop via the WARHAMMER series. Developer Creative Assembly built its latest RTS battle title on the much-talked-about DirectX 12 API, just like the original Total War: WARHAMMER, so that this title can benefit from all the associated features. The game itself is very CPU intensive and is capable of pushing any top-end system to its limits.

74 Comments


PeachNCream - Thursday, June 28, 2018

Oh wow, that's a terrible result! Thanks for sharing the information. I was expecting something more like 25% less performance in worst case scenarios, but that was clearly optimistic.

Lolimaster - Friday, June 29, 2018

GT1030 with 2133 DDR4 has basically 3x less bandwidth than the GDDR5 version, which is like giving an APU single channel DDR4 2133.

TheWereCat - Saturday, June 30, 2018

The funny thing is that even if it had "only" 25% less performance, the price difference (at least in my country) is only €4, so it wouldn't make sense anyway.

eastcoast_pete - Thursday, June 28, 2018

Yes, thanks for that. I looked it up. That was REALLY BAD. The 1030 DDR4 card paired with an i7-7700K got a serious butt kicking from the stock Ryzen 2200G with dual channel. The only thing slightly slower than that 1030 setup was if they hogtied the 2200G with single channel memory only, which one would only do if truly desperate. Eye opening, really. Much better off with the 2200G (CPU+iGPU) for about the price of the 1030DDR4 dGPU alone. Nvidia really made one stinker of a card here.

Lolimaster - Friday, June 29, 2018

With DDR4 the GT1030 can lose so much performance that even the 2200G walks over it.

Lolimaster - Friday, June 29, 2018

Simple, 64bit + DDR vs dual channel DDR on the APU.

29a - Thursday, June 28, 2018

Please remove 3DPM from the benchmark suite; it would have been much better to include video encoding benchmarks. Seems the 3DPM benchmark is only included for ego purposes, because as usual it offered no beneficial information.

boeush - Thursday, June 28, 2018

Yeah, for budget/consumer CPUs/APUs such science/engineering oriented benchmarks seem to be off-topic. Conversely, if you're going to test STEAM aspects, then commit all the way and include the Chrome compilation benchmark, and maybe also include something about molecular dynamics and electronic circuit simulation...

lightningz71 - Thursday, June 28, 2018

First of all, thank you for putting together this series of articles. I really respect the time and effort that went into all of this. I don't know if you have any more articles planned, but I would like to offer some constructive criticism.

My first point is that you claim to focus on real world scenarios and expectations for the two processors, then proceeded to go right into a scenario that was anything but. The vast majority of the benchmarks that were run were set to 1080p at high detail. It is widely accepted that the performance target of the two processors is either 720p at mid-high detail or 1080p at very low detail. Most people aim for about 60fps for these budget solutions to be considered well playable. Most of your gaming benchmarks never made it north of 30fps.

My second point is that this review neglected to show what the processors would achieve with memory scaling while the iGPU is also overclocked. If the user is going to go to the effort of turning their memory clocks up to 3400 Mxfrs, they are also very likely to overclock their iGPU at the same time. Another point in support of this is the unusual memory scaling that was seen between the two highest memory clock settings. A higher-clocked iGPU might have made better use of the higher-clocked memory and shown more linear scaling.

I suggest that you make a follow-up article that focuses on the gaming and content creation aspects with both processors, run at both 720p high and 1080p low with the iGPU at stock and overclocked to 1500 MHz. I suspect that the numbers would be very enlightening.

Again, thank you. I hope that you can take the time to look at my suggestions and try a follow-up article.

eastcoast_pete - Thursday, June 28, 2018

Yes, thanks to Gavin for this continued exploration of the Ryzen chips with Vega iGPUs. Glad to see that your hand has healed up.

About Lightningz71's points: +1. I fully agree, the real world scenario for anyone who goes through the trouble to boost the memory clock would be to OC the iGPUs to 1500 MHz and maybe even higher. A German site had a test where they sort of did that, found some strange unstable behavior at mild overclock of the iGPUs, but regained stability once they overvolted a bit more and hit 1450 MHz and up. They did find quite substantial increases in frame rates, making some games playable at settings that previously failed to get even close to 30fps. But, their 2200G and 2400G Ryzens were on stock heatsinks and NOT delidded, so I have even higher hopes for Gavin's setups.

Lastly, given the current crazy high prices for memory, I'd love to see at least some data for 2x4 GB, representing the true ~$300 potato with a 2200G.