Investigating Cavium's ThunderX: The First ARM Server SoC With Ambition

by Johan De Gelas on June 15, 2016 8:00 AM EST - Posted in SoCs, IT Computing, Enterprise, Enterprise CPUs, Microserver, Cavium

Xeon D vs ThunderX: Supermicro vs Gigabyte

While SoC literally stands for "system on a chip", in practice it is still just one component of a whole server. A new SoC cannot make it to market alone; it needs the backing of server vendors to provide the rest of the hardware around it and turn it into a complete system.

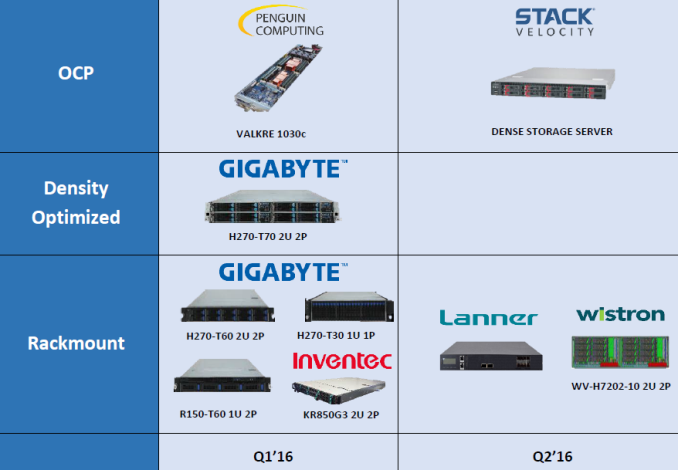

To that end, Gigabyte has adopted Cavium's ThunderX in quite a few different servers. Meanwhile on the Intel side, Supermicro is the company with the widest range of Xeon D products.

Other server vendors, like Penguin Computing and Wistron, will make use of the ThunderX, and you'll find Xeon D systems from over a dozen vendors. But it is clear that Gigabyte and Supermicro are the vendors that make the ThunderX and the Xeon D, respectively, available to the widest range of companies.

For today's review we got access to the Gigabyte R120-T30.

Although density is important, we cannot say that we are fans of 1U servers: the small fans in those systems tend to waste a lot of energy.
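Why small fans cost so much energy can be sketched with the idealized fan affinity laws, which we assume here: for geometrically similar fans, airflow scales as N·D^3 and shaft power as N^3·D^5 (N = rotational speed, D = diameter). Holding airflow constant, the required speed scales as D^-3 and the power as D^-4. The diameters below (40 mm for a 1U chassis, 80 mm for a taller one) are hypothetical, and real fans, ducting, and static pressure will shift the numbers considerably; this only illustrates the trend.

```python
def relative_fan_power(d_small_mm: float, d_large_mm: float) -> float:
    """Power of a small fan relative to a larger, geometrically similar fan
    moving the same airflow, under the idealized fan affinity laws.

    airflow Q ~ N * D**3      -> at fixed Q, speed N ~ D**-3
    power   P ~ N**3 * D**5   -> at fixed Q, P ~ D**-9 * D**5 = D**-4
    """
    if d_small_mm <= 0 or d_large_mm <= 0:
        raise ValueError("fan diameters must be positive")
    return (d_large_mm / d_small_mm) ** 4


if __name__ == "__main__":
    # Hypothetical diameters: 40 mm (1U-class) vs 80 mm (2U-class) fans.
    ratio = relative_fan_power(40.0, 80.0)
    print(f"Idealized power ratio for equal airflow: {ratio:.0f}x")
```

The fourth-power dependence is why even a modest increase in fan diameter, which a taller chassis allows, can matter a lot for the power drawn by cooling.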

Eight DIMM slots allow the ThunderX SoC to offer up to 512 GB (8 x 64 GB), but realistically 256 GB (8 x 32 GB) is probably the maximum practical capacity in 2016. Still, that is twice as much as the Xeon D supports, which can be an advantage for caching or big data servers. Of course, Cavium is the intelligent network company, and that is where this server really distinguishes itself. One Quad Small Form-factor Pluggable Plus (QSFP+) port delivers 40 Gb/s, and combined with four 10 Gb/s Small Form-factor Pluggable Plus (SFP+) ports, a complete ThunderX system is good for a total of 80 Gb/s of network bandwidth.
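The capacity and bandwidth figures above follow from simple arithmetic, sketched below. The Xeon D maximum of 128 GB is an assumption inferred from the "twice as much" comparison rather than a figure stated in this review.

```python
# Figures from the text: 8 DIMM slots, 512 GB maximum, 256 GB practical (8 x 32 GB),
# one 40 Gb/s QSFP+ port plus four 10 Gb/s SFP+ ports.
DIMM_SLOTS = 8
MAX_CAPACITY_GB = 512
PRACTICAL_DIMM_GB = 32
XEON_D_MAX_GB = 128  # assumption: implied by "twice as much as the Xeon D"

QSFP_PLUS_GBPS = 40
SFP_PLUS_GBPS = 10
SFP_PLUS_PORTS = 4


def dimm_size_for_max(max_gb: int, slots: int) -> int:
    """DIMM size needed to reach the maximum capacity with every slot filled."""
    return max_gb // slots


def total_network_gbps() -> int:
    """Aggregate Ethernet bandwidth of the integrated ports, in Gbit/s."""
    return QSFP_PLUS_GBPS + SFP_PLUS_PORTS * SFP_PLUS_GBPS


if __name__ == "__main__":
    practical_gb = DIMM_SLOTS * PRACTICAL_DIMM_GB
    print("DIMM size for 512 GB:", dimm_size_for_max(MAX_CAPACITY_GB, DIMM_SLOTS), "GB")
    print("Practical capacity:", practical_gb, "GB")
    print("Ratio vs Xeon D:", practical_gb / XEON_D_MAX_GB)
    print("Total network bandwidth:", total_network_gbps(), "Gbit/s")
```

Reaching the 512 GB ceiling therefore requires 64 GB DIMMs in every slot, which is why 256 GB is the realistic configuration.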

Along with an extensive amount of dedicated network I/O, Cavium has also outfitted the ThunderX with a large number of SATA host ports: 16 in total. This frees the three PCIe 3.0 x8 links for purposes other than storage or network I/O.
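To put the freed-up PCIe capacity in perspective, here is a rough per-direction bandwidth estimate. PCIe 3.0 signals at 8 GT/s per lane with 128b/130b encoding; packet overhead is ignored, so real throughput is lower. The SATA line rate of 6 Gb/s (with 8b/10b encoding) is an assumption, as the review does not state the SATA generation.

```python
PCIE3_GT_PER_S = 8.0          # PCIe 3.0 signalling rate per lane (GT/s)
PCIE3_ENCODING = 128 / 130    # PCIe 3.0 uses 128b/130b line coding


def pcie3_gbytes_per_s(lanes: int) -> float:
    """Approximate PCIe 3.0 bandwidth per direction, in GB/s.

    Ignores transaction/link-layer packet overhead.
    """
    return PCIE3_GT_PER_S * PCIE3_ENCODING * lanes / 8


def sata_gbytes_per_s(ports: int, gbit_per_port: float = 6.0) -> float:
    """Approximate aggregate SATA bandwidth in GB/s (8b/10b line coding).

    The 6 Gb/s default is an assumption, not a figure from the review.
    """
    return ports * gbit_per_port * (8 / 10) / 8


if __name__ == "__main__":
    per_link = pcie3_gbytes_per_s(8)
    print(f"One PCIe 3.0 x8 link: {per_link:.2f} GB/s per direction")
    print(f"Three x8 links: {3 * per_link:.2f} GB/s per direction")
    print(f"16 SATA ports: {sata_gbytes_per_s(16):.1f} GB/s")
```

Under these assumptions the integrated SATA ports carry storage traffic that would otherwise consume a significant share of the roughly 23.6 GB/s available over the three x8 links.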

That said, the 1U chassis of the R120-T30 is somewhat at odds with the capabilities of the ThunderX: there are 16 SATA ports, but only 4 hot-swap bays. Big Data platforms make use of HDFS, which typically uses a replication factor of 3 (each block is stored three times) and whose performance scales well with the number of disks, since throughput rather than latency is what counts. As a result, many people are searching for systems with lots of disk bays.
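The cost of having only 4 bays can be sketched numerically. With a replication factor of 3, usable HDFS capacity is the raw capacity divided by three, and idealized sequential throughput grows with the number of spindles. The 4 TB disk size, the 150 MB/s per-disk rate, and the 16-bay comparison chassis are all hypothetical values chosen for illustration.

```python
def hdfs_usable_tb(bays: int, disk_tb: float, replication: int = 3) -> float:
    """Usable HDFS capacity when every block is stored `replication` times."""
    if replication < 1:
        raise ValueError("replication must be at least 1")
    return bays * disk_tb / replication


def aggregate_stream_mb_s(bays: int, disk_mb_s: float) -> float:
    """Idealized sequential throughput with all disks streaming in parallel."""
    return bays * disk_mb_s


if __name__ == "__main__":
    DISK_TB = 4.0        # hypothetical disk size
    DISK_MB_S = 150.0    # hypothetical per-disk sequential rate
    for bays in (4, 16):  # R120-T30 bays vs a hypothetical 16-bay chassis
        print(f"{bays:2d} bays: {hdfs_usable_tb(bays, DISK_TB):.1f} TB usable, "
              f"{aggregate_stream_mb_s(bays, DISK_MB_S):.0f} MB/s aggregate")
```

Filling all 16 SATA ports would quadruple both usable capacity and aggregate streaming throughput in this idealized model.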

Finally, we're happy to report that there is no lack of monitoring and remote management capabilities. A serial port is available for low-level debugging, and an ASPEED AST2400 BMC with an out-of-band gigabit Ethernet port lets you manage the server remotely.

82 Comments

willis936 - Thursday, June 16, 2016

Are you sure that there are more cores at lower clocks to keep the voltage lower? Power consumption is proportional to V^2 * f.

ddriver - Friday, June 17, 2016

Say what? Go back, read my previous post again, and if you are going to respond, make sure it is legible.

willis936 - Friday, June 17, 2016

Alright, well, if you don't understand why many slower cores are more power efficient even if there were a 0-cycle penalty on context switching, then you aren't worth having this discussion with.

blaktron - Wednesday, June 15, 2016

48 cores of server processing on 16 MB of L2 and 4 channels of RAM? What is this thing designed for? It will be like running single-channel Celerons as server processors, so decent hypervisor hosts are out, and so is any database work more complex than dynamic web pages.

Haravikk - Wednesday, June 15, 2016

Facebook is specifically mentioned as being interested in this, so dynamic web pages are definitely a valid use case here. HHVM, for example, is pretty light on memory usage (so is PHP 7 now), especially in high-demand cases where you're really only running a single set of scripts, probably cached in compiled form, and both scale really well across as many cores as you can throw at them.

Things like nginx and MariaDB will be the same, so they're absolutely intended use cases for this kind of chip, and I think it should be very good at them.

blaktron - Wednesday, June 15, 2016

With no L3 and slow RAM access, I'm not sure where you think the scripts will be cached. Assuming you ran them on bare metal (a horrifying waste of compute), there would be enough, but if you had Docker instances or quick-spin VMs doing your work (as 99% of web servers do), then each instance will only get the tiniest slice of cache to work with. It would be like running your servers, as I said, on a bank of Celerons. Except Celerons have L3 and don't carry 12 cores per memory channel.

spaceship9876 - Wednesday, June 15, 2016

Hopefully someone will release a server chip using 64 Cortex-A73 CPU cores; I'm pretty sure the Cortex-A73 will be more power efficient than the Xeon D. The Xeon D beats the Cortex-A57 in power efficiency, but I'm pretty sure that the Cortex-A72 will be similar and the Cortex-A73 will beat it.

Flunk - Wednesday, June 15, 2016

ARM with ambition? I've heard that before; nothing came of it.

CajunArson - Wednesday, June 15, 2016

Interesting article. This does appear to be the first semi-credible part from an ARM server vendor.

Having said that, the energy efficiency table at the end should put to rest any misconceived notions that ARM is somehow magically energy efficient while x86 isn't.

Considering that the Xeon E5-2690 v3 is a 4.5-year-old Sandy Bridge part made on a 32 nm process and it still has better performance-per-watt than the best ARM server parts available in 2016, it's pretty obvious that Intel has done an excellent job with power efficiency.

kgardas - Wednesday, June 15, 2016

To CajunArson: (1) You can't compare the energy efficiency of CPUs made on different nodes. 28nm versus 14nm? This is apples to oranges. (2) The Xeon E5-2690 *v3* is Haswell, not Sandy Bridge, and it's definitely not 4.5 years old.